CyberShake Code Base

This page details all the pieces of code which make up the CyberShake code base, as of November 2017. Note that this does not include the workflow middleware, or the workflow generators; that code is detailed at CyberShake Workflow Framework.

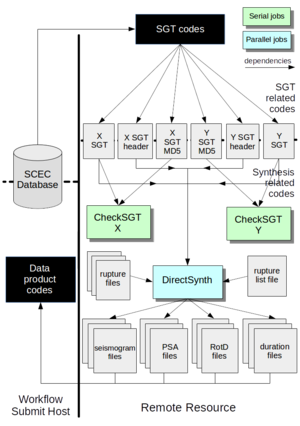

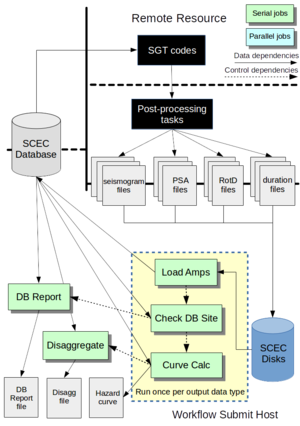

Conceptually, we can divide up the CyberShake codes into three categories:

- Strain Green Tensor-related codes: These codes produce the input files needed to generate SGTs, actually calculate the SGTs, and do some reformatting and sanity checks on the results.

- Synthesis-related codes: These codes take the SGTs and perform seismogram synthesis and intensity measure calculations.

- Data product codes: These codes insert the results into the database, and use the database to generate a variety of output data products.

Below is a description of each piece of software we use, organized by these categories. For each piece of software, we include a description of where it is located, how to compile and use it, and what its inputs and outputs are. At the end, we provide a description of input and output files and formats.

Contents

- 1 Code Installation

- 2 SGT-related codes

- 3 PP-related codes

- 4 Data Product Codes

- 5 Stochastic codes

- 6 File types

- 6.1 Modelbox

- 6.2 Gridfile

- 6.3 Gridout

- 6.4 Params

- 6.5 Coord

- 6.6 Bounds

- 6.7 Velocity files

- 6.8 Fdloc

- 6.9 Faultlist

- 6.10 Radiusfile

- 6.11 SGT Coordinate files

- 6.12 Impulse source descriptions

- 6.13 IN3D

- 6.14 AWP SGT

- 6.15 RWG SGT

- 6.16 SGT header file

- 6.17 Velocity Info file

- 6.18 BBP 1D Velocity file

- 6.19 Local VM file

- 6.20 Missing variations file

- 6.21 DB Report file

- 6.22 Hazard Curve

- 6.23 Disaggregation file

- 7 Dependencies

Code Installation

Historically, we have selected a root directory for CyberShake, then created the subdirectories 'software' for all the code, 'ruptures' for the rupture files, 'logs' for log files, and 'utils' for workflow tools. This is typically set up in unpurged storage space, so once installed purging isn't a worry. Each code listed below, along with the configuration file, should be checked out into the 'software' subdirectory.

In terms of compilers, you should use the GNU compilers unless specifically directed otherwise.

Most of the codes below contain a main directory. Inside that is a bin directory, with binaries; a src directory with code requiring compilation; and wrappers, in the main directory.

Configuration file

Many CyberShake codes use a configuration file, which specifies the root directory for the CyberShake installation, the command used to start an MPI executable, paths to a tmp and scratch space (which can be the same), and the path to the CyberShake rupture directory. We use a file instead of environment variables because it's more transparent and easier for multiple users. Both the configuration file and the Python reader script described below should be stored in the 'software' subdirectory.

The configuration file is available at:

http://source.usc.edu/svn/cybershake/import/trunk/cybershake.cfg

Obviously, this file must be edited to be correct for the install. Since this is just a file, you will probably need to get it using 'svn export' instead of 'svn checkout'.

The keys that CyberShake currently expects to find are:

- CS_PATH = /path/to/CyberShake/software/directory

- SCRATCH_PATH = /path/to/shared/scratch

- TMP_PATH = /path/to/tmp (can be node-local, or shared with scratch)

- RUPTURE_PATH = /path/to/CyberShake/rupture/directory

- MPI_CMD = ibrun or aprun or mpiexec

- LOG_PATH = /path/to/CyberShake/logs/directory

Additionally, you must check out a Python script which is used to read in the configuration file and deliver it as key-value pairs, located here:

http://source.usc.edu/svn/cybershake/import/trunk/config.py

Several CyberShake codes import config, then use it to read out the cybershake.cfg file.
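As an illustration of the key-value approach described above, here is a minimal sketch of a reader for a file in the `KEY = value` layout listed earlier. It is not the actual config.py; the function names `parse_config_lines` and `read_config`, and the treatment of `#` lines as comments, are assumptions made for this example.

```python
def parse_config_lines(lines):
    """Turn 'KEY = value' lines into a dict.

    Blank lines and lines starting with '#' are skipped; treating '#'
    as a comment marker is an assumption, not a documented feature.
    """
    config = {}
    for line in lines:
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if not sep:
            continue  # no '=' on this line, so it is not a key-value pair
        config[key.strip()] = value.strip()
    return config


def read_config(path):
    """Read a cybershake.cfg-style file from disk (hypothetical helper)."""
    with open(path) as fp:
        return parse_config_lines(fp)
```

A caller might then do `cs_path = read_config("cybershake.cfg")["CS_PATH"]` and fail loudly with a `KeyError` if an expected key is absent.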

Compiler file

A long time ago, Gideon Juve created a compiler file, Compilers.mk, which contains information about which compilers should be used for which system. This file should also be downloaded using 'svn export' and installed in the software directory, from

http://source.usc.edu/svn/cybershake/import/trunk/Compilers.mk

Some of the makefiles reference this file. This can - and should - be updated to reflect new systems.

SGT-related codes

PreCVM

PreCVM stands for "Pre-Community-Velocity-Model". It must be run before the UCVM codes, since it generates input files required by UCVM.

Purpose: To determine the simulation volume for a particular CyberShake site.

Detailed description: PreCVM queries the CyberShake database to determine all of the ruptures which fall within a given cutoff for a certain site. From that information, padding is added around the edges to construct the CyberShake simulation volume for this site. Additional padding so the X and Y dimensions are multiples of 10, 20, or 40 might also be applied, depending on the input parameters. Using this volume, both the X/Y offset of each grid point, and then the latitude and longitude using a great circle projection, are determined and written to output files.
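The padding to a multiple of 10, 20, or 40 amounts to rounding a grid dimension up. The sketch below shows only that arithmetic; the function name is hypothetical, and the real logic in Modelbox/get_modelbox.py may distribute the extra points differently.

```python
def pad_to_multiple(n_points, multiple):
    """Round n_points up to the nearest multiple of `multiple`."""
    remainder = n_points % multiple
    if remainder == 0:
        return n_points  # already evenly divisible
    return n_points + (multiple - remainder)
```

For example, a dimension of 1234 grid points padded to a multiple of 20 becomes 1240.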

Needs to be changed if:

- The CyberShake volume depth needs to be changed, so as to have the right number of grid points. That is set in the genGrid() function in GenGrid_py/gen_grid.py, in km.

- X and Y padding needs to be altered. That is set using 'bound_pad' in Modelbox/get_modelbox.py, around line 70.

- The rotation of the simulation volume needs to be changed. That is set using 'model_rot' in Modelbox/get_modelbox.py, around line 70.

- The database access parameters have changed. That's in Modelbox/get_modelbox.py, around line 80.

- The divisibility needs for GPU simulations change (currently, we need the dimensions to be evenly divisible by the number of GPUs used in that dimension). That is in Modelbox/get_modelbox.py, around line 250.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/PreCVM/

Author: Rob Graves, wrapped by Scott Callaghan

Dependencies: Getpar, MySQLdb for Python

Executable chain:

pre_cvm.py

Modelbox/get_modelbox.py

Modelbox/bin/gcproj

GenGrid_py/gen_grid.py

GenGrid_py/bin/gen_model_cords

Compile instructions: Run 'make' in the Modelbox/src and the GenGrid_py/src directories.

Usage:

Usage: pre_cvm.py [options]

Options:

-h, --help show this help message and exit

--site=SITE Site name

--erf_id=ERF_ID ERF ID

--modelbox=MODELBOX Path to modelbox file (output)

--gridfile=GRIDFILE Path to gridfile (output)

--gridout=GRIDOUT Path to gridout (output)

--coordfile=COORDSFILE Path to coorfile (output)

--paramsfile=PARAMSFILE Path to paramsfile (output)

--boundsfile=BOUNDSFILE Path to boundsfile (output)

--frequency=FREQUENCY Frequency

--gpu Use GPU box settings.

--spacing=SPACING Override default spacing with this value.

--server=SERVER Address of server to query in creating modelbox, default is focal.usc.edu.

Typical run configuration: Serial; requires 6 minutes for 100m spacing, 10 billion point volume

Input files: None; inputs are retrieved from the database

Output files: modelbox, gridfile, gridout, params, coord, bounds

UCVM

Purpose: To generate a populated velocity mesh for a CyberShake simulation volume.

Detailed description: UCVM takes the volume defined by PreCVM and queries the UCVM software, using the C API, to populate the volume. The resulting mesh is then checked for Vp/Vs ratio, for minimum Vp, Vs, and rho, and for the absence of Infs and NaNs. The data is written in either Graves (RWG) format or AWP format. This code also produces log files, which are written to the CyberShake logs directory/GenLog/site/v_mpi-<processor number>.log. These can be useful if there's an error and you aren't sure why.
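The mesh checks can be pictured with the following sketch for a single mesh point. It is illustrative only: the function name and all thresholds are hypothetical parameters, and the actual checks live in the C code.

```python
import math


def check_mesh_point(vp, vs, rho, min_vp, min_vs, min_rho, min_ratio):
    """Return a list of problems for one mesh point (empty if it passes).

    All thresholds are caller-supplied; none are the real CyberShake values.
    """
    problems = []
    for name, value in (("vp", vp), ("vs", vs), ("rho", rho)):
        if not math.isfinite(value):  # catches both Inf and NaN
            problems.append(name + " is not finite")
    if problems:
        return problems  # comparisons below are meaningless for Inf/NaN
    if vp < min_vp:
        problems.append("vp below minimum")
    if vs < min_vs:
        problems.append("vs below minimum")
    if rho < min_rho:
        problems.append("rho below minimum")
    # Compare vp against min_ratio * vs to avoid dividing by a zero vs.
    if vp < min_ratio * vs:
        problems.append("vp/vs ratio below minimum")
    return problems
```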

Needs to be changed if:

- New velocity models are added. Velocity models are specified in the DAX and passed through the wrapper scripts into the C code and then ultimately to UCVM, so an if statement must be added around line 250 (and around line 450 if it's applicable for no GTL).

- The backend UCVM substantially changes, for example if we move to the Python implementation.

- If additional models are added, new libraries may need to be added to the makefile.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/UCVM

Author: Scott Callaghan

Executable chain:

single_exe.py

single_exe.csh

bin/ucvm-single-mpi

Compile instructions: The makefile needs to be edited so that "UCVM_HOME" points to the UCVM home directory. Then run 'make' in the UCVM/src directory.

Usage:

All of site, gridout, modelcords, models, and format must be specified.

Usage: single_exe.py [options]

Options:

-h, --help show this help message and exit

--site=SITE Site name

--gridout=GRIDOUT Path to gridout (output)

--coordfile=COORDSFILE Path to coordfile (output)

--models=MODELS Comma-separated string on velocity models to use.

--format=FORMAT Specify awp or rwg format for output.

--frequency=FREQUENCY Frequency

--spacing=SPACING Override default spacing with this value (km)

--min_vs=MIN_VS Override minimum Vs value. Minimum Vp and minimum density will be 3.4 times this value.

Typical run configuration: Parallel on ~4000 cores; for 10 billion points and the C version of UCVM, takes about 20 minutes. Typically only half the cores per node are used to get more memory per process.

Output files: either RWG format or AWP format, depending on the option selected.

Smoothing

Purpose: To smooth a velocity file along model interfaces.

Detailed description: The smoothing code takes in a velocity mesh, determines the surface coordinates of the interfaces between velocity models, gets a list of all the points which need to be smoothed, and then performs the smoothing by averaging in both the X and Y direction for a user-specified number of points (default of 10km in each direction).
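To make the averaging step concrete, here is a small sketch that averages one point of a 2D slice over its neighbors along X and Y. It assumes a plain arithmetic mean and clipping at the mesh edges; the real average_point() in smooth_mpi.c may weight or select points differently, and the function name here is hypothetical.

```python
def average_point_sketch(grid, x, y, dist):
    """Mean of the values within `dist` points of (x, y) along X and Y.

    `grid` is a list of rows, indexed grid[y][x]. Neighbors that would
    fall outside the mesh are simply left out of the average.
    """
    ny = len(grid)
    nx = len(grid[0])
    values = []
    # Neighbors along X (same row), including the point itself.
    for xi in range(max(0, x - dist), min(nx, x + dist + 1)):
        values.append(grid[y][xi])
    # Neighbors along Y (same column); skip the center, already counted.
    for yi in range(max(0, y - dist), min(ny, y + dist + 1)):
        if yi != y:
            values.append(grid[yi][x])
    return sum(values) / len(values)
```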

Needs to be changed if:

- We change our version of UCVM. The LD_LIBRARY_PATH needs to be modified, in run_smoothing.py around line 98.

- The smoothing algorithm is modified. Currently that is specified in the average_point() function in smooth_mpi.c.

- We start using velocity models whose boundaries aren't perpendicular to the earth's surface.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/UCVM/smoothing

Author: Scott Callaghan

Dependencies: UCVM

Executable chain:

smoothing/run_smoothing.py

bin/determine_surface_model

smoothing/determine_smoothing_points.py

smoothing/smooth_mpi

Compile instructions: Run 'make' in the smoothing directory, and make sure that determine_surface_model has been compiled in the UCVM/src directory. You may need to change the compiler; currently it uses 'cc'.

Usage:

Usage: run_smoothing.py [options]

Options:

-h, --help show this help message and exit

--gridout=GRIDOUT gridout file

--coords=COORDS coords file

--models=MODELSTRING comma-separated list of velocity models

--smoothing-dist=SMOOTHING_DIST Number of grid points to smooth over. About 10km of grid points is a good starting place.

--mesh=MESH AWP-format velocity mesh to smooth

--mesh-out=MESH_OUT Output smoothed mesh

Typical run configuration: Parallel on ~1500 cores; for 5 billion points and the C version of UCVM, takes about 16 minutes.

Input files: AWP format velocity file, gridout, coord

Output files: AWP format smoothed velocity file.

PreSGT

Purpose: To generate a series of input files which are used by the wave propagation codes.

Detailed description: PreSGT determines the X and Y coordinates of the site location (where the impulse will go for the wave propagation simulation) and determines which mesh point (X and Y) maps most closely to every point on a fault surface which is within the cutoff. That information is combined with an adaptive mesh approach to create a list of all the points for which SGTs should be saved.

Needs to be changed if:

- We change our approach for saving adaptive mesh points.

- We change the location of the rupture geometry files, currently assumed to be <rupture root>/Ruptures_erf<erf ID> . This is specified in presgt.py, line 167.

- The directory hierarchy and naming scheme for rupture geometry files, currently <src id>/<rup id>/<src id>_<rup_id>.txt, changes. This is specified in faultlist_py/CreateFaultList.py, line 36.

- The number of header lines in the rupture geometry file changes. This would require changing the nheader value, currently 6, specified in faultlist_py/CreateFaultList.py, line 36.

- We switch to RSQSim ruptures, or other ruptures in which the geometry isn't planar. Modifications would be required to gen_sgtgrid.c.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/PreSgt

Author: Rob Graves, heavily modified by Scott Callaghan

Dependencies: Getpar, libcfu, MySQLdb for Python

Executable chain:

presgt.py

faultlist_py/CreateFaultList.py

bin/gen_sgtgrid

Compile instructions: Run 'make' in the src directory.

Usage:

Usage: ./presgt.py <site> <erf_id> <modelbox> <gridout> <model_coords> <fdloc> <faultlist> <radiusfile> <sgtcords> <spacing> [frequency]

Example: ./presgt.py USC 33 USC.modelbox gridout_USC model_coords_GC_USC USC.fdloc USC.faultlist USC.radiusfile USC.cordfile 200.0 0.1

Typical run configuration: Parallel on 8 nodes, 32 cores (gen_sgtgrid is a parallel code); for 200m spacing UCERF2, takes about 8 minutes.

Input files: modelbox, gridout, coord

Output files: fdloc, faultlist, radiusfile, sgtcoords.

PreAWP

Purpose: To generate input files in a format that AWP-ODC expects.

Detailed description: PreAWP performs a number of steps:

- An IN3D parameter file is produced, needed for AWP-ODC.

- A file with the SGT coordinates to save in AWP format is produced. Since RWG and AWP use different coordinate systems, a coordinate transformation (X->Y, Y->X, zero-indexing->one-indexing) is performed on the SGT coordinates file.

- The velocity file is translated to AWP format, if it isn't in AWP format already.

- The correct source, based on the dt and nt, is selected. The source must be generated manually ahead of time. Details about source generation are given here.

- Striping for the output file is also set up here.

- Files are symlinked into the directory structure that AWP expects. Note that slightly different versions of this exist for the CPU and GPU implementations of AWP-ODC-SGT.
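The SGT coordinate transformation in the steps above (X->Y, Y->X, zero-indexing->one-indexing) can be sketched as follows. This is an illustration, not the code in gen_awp_cordfile.py; the function names are hypothetical, and applying the one-based shift to Z as well is an assumption.

```python
def rwg_to_awp(x, y, z):
    """Swap X and Y and shift from zero-based to one-based indices.

    Shifting Z by one as well is an assumption of this sketch.
    """
    return (y + 1, x + 1, z + 1)


def awp_to_rwg(x, y, z):
    """Inverse of rwg_to_awp: shift back to zero-based, then swap X and Y."""
    return (y - 1, x - 1, z - 1)
```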

Needs to be changed if:

- The path to the Lustre striping command (lfs) changes. This path is hard-coded in build_awp_inputs.py, line 14. Note that this is the path to lfs on the compute node, NOT the login node.

- The AWP code changes its input format.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/AWP-GPU-SGT/utils/ (GPU) or http://source.usc.edu/svn/cybershake/import/trunk/AWP-ODC-SGT/utils/ (CPU), AND also http://source.usc.edu/svn/cybershake/import/trunk/SgtHead

Author: Scott Callaghan

Dependencies: SgtHead

Executable chain:

build_awp_inputs.py

build_IN3D.py

build_src.py

build_cordfile.py

SgtHead/gen_awp_cordfile.py

build_media.py

SgtHead/bin/reformat_velocity

Compile instructions: Run 'make' in the SgtHead/src directory.

Usage:

Usage: build_awp_inputs.py [options]

Options:

-h, --help show this help message and exit

--site=SITE Site name

--gridout=GRIDOUT Path to gridout input file

--fdloc=FDLOC Path to fdloc input file

--cordfile=CORDFILE Path to cordfile input file

--velocity-prefix=VEL_PREFIX RWG velocity prefix. If omitted, will not reformat velocity file, just symlink.

--frequency=FREQUENCY Frequency of SGT run, 0.5 Hz by default.

--px=PX Number of processors in X-direction.

--py=PY Number of processors in Y-direction.

--pz=PZ Number of processors in Z-direction.

--source-frequency=SOURCE_FREQ Low-pass filter frequency to use on the source, default is same frequency as the frequency of the run.

--spacing=SPACING Override default spacing, derived from frequency.

--velocity-mesh=VEL_MESH Provide path to velocity mesh. If omitted, will assume mesh is named awp.<site>.media.

Typical run configuration: Serial; for 1 Hz run, takes about 11 minutes.

Input files: gridout, fdloc, cordfile, velocity mesh (if in RWG format, will be converted to AWP), RWG source

Output files: IN3D, AWP source, AWP velocity mesh, AWP cordfile.

AWP-ODC-SGT, CPU version

Purpose: To perform SGT synthesis

Detailed description: AWP-ODC-SGT is the CPU version. It uses the IN3D file for its parameters.

Needs to be changed if:

- New science or features are added to the AWP code.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/AWP-ODC-SGT

Author: Kim Olsen, Steve Day, Yifeng Cui, various students and post-docs, wrapped by Scott Callaghan

Dependencies: iobuf module

Executable chain:

awp_odc_wrapper.sh

bin/pmcl3d

Compile instructions: Using the GNU compilers, run 'make' in the src directory.

Usage:

pmcl3d <IN3D parameter file>

Typical run configuration: Parallel; for 0.5 Hz run (2 billion points, 20k timesteps), takes about 45 minutes on 10,000 cores.

Input files: IN3D, AWP cordfile, AWP velocity mesh, AWP source

Output files: AWP SGT file.

AWP-ODC-SGT, GPU version

Purpose: To perform SGT synthesis

Detailed description: AWP-ODC-SGT is the GPU version. It takes parameters on the command-line, so the wrapper converts the IN3D file into command-line arguments and invokes it.
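As a rough picture of what such a wrapper does, the sketch below turns a dictionary of parameter values into a pmcl3d argument list using the long option names from the usage text. It assumes the IN3D file has already been parsed into a dict keyed by those names; IN3D parsing is not shown, the function name is hypothetical, and the real gpu_wrapper.py may map parameters differently.

```python
# Long option names taken from the pmcl3d usage text.
KNOWN_OPTIONS = {
    "TMAX", "DH", "DT", "ARBC", "PHT", "NPC", "ND", "NSRC", "NST", "NVE",
    "MEDIASTART", "NVAR", "IFAULT", "READ_STEP", "NX", "NY", "NZ",
    "NPX", "NPY", "NPZ", "NBGX", "NEDX", "NSKPX", "NBGY", "NEDY", "NSKPY",
    "NBGZ", "NEDZ", "NSKPZ", "IDYNA", "SoCalQ", "FL", "FH", "FP",
    "NTISKP", "WRITE_STEP", "INSRC", "INVEL", "OUT", "CHKFILE",
    "IGREEN", "NTISKP_SGT", "INSGT",
}


def build_pmcl3d_args(params, executable="./pmcl3d"):
    """Build an argument list such as ['./pmcl3d', '--NX', '100', ...].

    `params` maps option names to values; unknown names raise ValueError.
    """
    args = [executable]
    for name, value in params.items():
        if name not in KNOWN_OPTIONS:
            raise ValueError("unknown pmcl3d option: " + name)
        args.extend(["--" + name, str(value)])
    return args
```

The resulting list could be appended to the MPI launch command and passed to `subprocess.run`.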

Needs to be changed if:

- New science or features are added to the AWP code.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/AWP-GPU-SGT

Author: Kim Olsen, Steve Day, Yifeng Cui, various students and post-docs, wrapped by Scott Callaghan

Dependencies: CUDA toolkit module

Executable chain:

gpu_wrapper.py

bin/pmcl3d

Compile instructions: The PrgEnv-gnu and cudatoolkit modules must be loaded first. Then, run 'make' in the src directory.

Usage:

Usage: ./pmcl3d Options:

[(-T | --TMAX) <TMAX>]

[(-H | --DH) <DH>]

[(-t | --DT) <DT>]

[(-A | --ARBC) <ARBC>]

[(-P | --PHT) <PHT>]

[(-M | --NPC) <NPC>]

[(-D | --ND) <ND>]

[(-S | --NSRC) <NSRC>]

[(-N | --NST) <NST>]

[(-V | --NVE) <NVE>]

[(-B | --MEDIASTART) <MEDIASTART>]

[(-n | --NVAR) <NVAR>]

[(-I | --IFAULT) <IFAULT>]

[(-R | --READ_STEP) <x READ_STEP]

[(-X | --NX) <x length]

[(-Y | --NY) <y length>]

[(-Z | --NZ) <z length]

[(-x | --NPX) <x processors]

[(-y | --NPY) <y processors>]

[(-z | --NPZ) <z processors>]

[(-1 | --NBGX) <starting point to record in X>]

[(-2 | --NEDX) <ending point to record in X>]

[(-3 | --NSKPX) <skipping points to record in X>]

[(-11 | --NBGY) <starting point to record in Y>]

[(-12 | --NEDY) <ending point to record in Y>]

[(-13 | --NSKPY) <skipping points to record in Y>]

[(-21 | --NBGZ) <starting point to record in Z>]

[(-22 | --NEDZ) <ending point to record in Z>]

[(-23 | --NSKPZ) <skipping points to record in Z>]

[(-i | --IDYNA) <i IDYNA>]

[(-s | --SoCalQ) <s SoCalQ>]

[(-l | --FL) <l FL>]

[(-h | --FH) <i FH>]

[(-p | --FP) <p FP>]

[(-r | --NTISKP) <time skipping in writing>]

[(-W | --WRITE_STEP) <time aggregation in writing>]

[(-100 | --INSRC) <source file>]

[(-101 | --INVEL) <mesh file>]

[(-o | --OUT) <output file>]

[(-c | --CHKFILE) <checkpoint file to write statistics>]

[(-G | --IGREEN) <IGREEN for SGT>]

[(-200 | --NTISKP_SGT) <NTISKP for SGT>]

[(-201 | --INSGT) <SGT input file>]

Typical run configuration: Parallel; for 1 Hz run (10 billion points, 40k timesteps), takes about 55 minutes on 800 GPUs.

Input files: IN3D, AWP cordfile, AWP velocity mesh, AWP source

Output files: AWP SGT file.

PostAWP

Purpose: To prepare the AWP results for use in post-processing.

Detailed description: PostAWP prepares the outputs of AWP so that they can be used with the RWG-authored post-processing code. Specifically, it undoes the AWP coordinate transformation and reformats the AWP output files into the SGT component order expected by RWG (XX->YY, YY->XX, XZ->-YZ, YZ->-XZ, and all SGTs are doubled if we are calculating the Z-component), creates separate SGT header files, and calculates MD5 sums on the SGT files. Calculating the header information requires a number of input files, since lambda, mu, and the location of the impulse must all be included. The MD5 sums can be calculated separately, using the MD5 wrapper RunMD5sum.
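The component reordering can be illustrated with a small mapping table. This sketch reads each arrow as "AWP component on the left becomes the RWG component on the right", and it assumes ZZ and XY pass through unchanged since the text does not mention them; both readings are assumptions, as is the function name. The authoritative logic is in reformat_awp_mpi.

```python
# AWP component -> (RWG component, sign). ZZ and XY are assumed unchanged.
AWP_TO_RWG = {
    "XX": ("YY", 1.0),
    "YY": ("XX", 1.0),
    "ZZ": ("ZZ", 1.0),
    "XY": ("XY", 1.0),
    "XZ": ("YZ", -1.0),
    "YZ": ("XZ", -1.0),
}


def reformat_sgt_point(awp_values, z_component=False):
    """Remap one point's six SGT components from AWP to RWG order.

    All values are doubled when the Z-component is being calculated.
    """
    scale = 2.0 if z_component else 1.0
    rwg_values = {}
    for awp_name, value in awp_values.items():
        rwg_name, sign = AWP_TO_RWG[awp_name]
        rwg_values[rwg_name] = sign * scale * value
    return rwg_values
```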

Needs to be changed if:

- The AWP code is modified to produce outputs in exactly RWG order

- The header format for the post-processing code changes

- We decide not to calculate MD5 sums

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/AWP-GPU-SGT/utils/prepare_for_pp.py (this will work for the CPU version of AWP also, despite the path); http://source.usc.edu/svn/cybershake/import/trunk/software/SgtHead

Author: Scott Callaghan

Dependencies: Getpar

Executable chain:

AWP-GPU-SGT/utils/prepare_for_pp.py

SgtHead/bin/reformat_awp_mpi

SgtHead/bin/write_head

Compile instructions: Run 'make write_head' and 'make reformat_awp_mpi' in the SgtHead/src directory.

Usage:

Usage: ./prepare_for_pp.py <site> <AWP SGT> <reformatted SGT filename> <modelbox file> <rwg cordfile> <fdloc file> <gridout file> <IN3D file> <AWP media file> <component> <run_id> <header> [frequency]

Typical run configuration: Parallel, 4 processors on 2 nodes; for a 750 GB SGT, takes about 100 minutes without the MD5 sums.

Input files: AWP SGT file, modelbox, RWG cordfile, fdloc, IN3D, AWP velocity mesh

Output files: RWG SGT file, SGT header file

RunMD5sum

Purpose: Wrapper for performing MD5sums.

Detailed description: On Titan, we ran into wallclock issues when bundling the MD5sums along with PostAWP. This wrapper supports performing the MD5 sums separately.

Needs to be changed if:

- We change hash algorithms

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/SgtHead/run_md5sum.sh

Author: Scott Callaghan

Dependencies: none

Executable chain:

run_md5sum.sh

Compile instructions: none

Usage:

Usage: ./run_md5sum.sh <file>

Typical run configuration: Serial; for a 750 GB SGT, takes about 70 minutes.

Input files: RWG SGT file

Output files: MD5sum, with filename <RWG SGT filename>.md5

NanCheck

Purpose: Check the SGTs for anomalies before the post-processing.

Detailed description: This code checks to be sure the SGTs are the expected size, then checks for NaNs or too many consecutive zeros in the SGT files.
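The NaN and consecutive-zero checks can be sketched as a single pass over the values. This is an illustration only: the function name and the `max_zero_run` threshold are hypothetical, and the real check_for_nans reads the binary SGT file directly.

```python
import math


def check_values(values, max_zero_run):
    """Return a description of the first anomaly found, or None if clean.

    An anomaly is a NaN or a run of more than `max_zero_run` consecutive
    zeros; the threshold is supplied by the caller.
    """
    zero_run = 0
    for index, value in enumerate(values):
        if math.isnan(value):
            return "NaN at index %d" % index
        if value == 0.0:
            zero_run += 1
            if zero_run > max_zero_run:
                return "more than %d consecutive zeros ending at index %d" % (
                    max_zero_run, index)
        else:
            zero_run = 0  # a non-zero value breaks the run
    return None
```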

Needs to be changed if:

- We change the number of timesteps in the SGT file. Currently this is hardcoded, but it should be a command-line parameter.

- We want to add additional checks.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/SgtTest/

Author: Rob Graves, Scott Callaghan

Dependencies: Getpar

Executable chain:

perform_checks.py

bin/check_for_nans

Compile instructions: Run 'make' in SgtTest/src .

Usage:

Usage: ./perform_checks.py <SGT file> <SGT header file>

Typical run configuration: Serial; for a 750 GB SGT, takes about 45 minutes.

Input files: AWP SGT file, RWG coordinate file, IN3D file

Output files: none

PP-related codes

The following codes are related to the post-processing part of the workflow.

CheckSgt

Purpose: To check the MD5 sums of the SGT files to be sure they match.

Detailed description: CheckSgt takes the SGT files and their corresponding MD5 sums and checks for agreement.
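A check of this kind can be sketched with Python's hashlib, reading the file in chunks so that a multi-hundred-GB SGT never has to fit in memory. The function names are hypothetical, and the sketch assumes the .md5 file uses the usual md5sum layout (hex digest first, then the filename); it is not the actual CheckSgt.py.

```python
import hashlib


def md5_of_file(path, chunk_size=1 << 20):
    """Compute the MD5 hex digest of a file, reading 1 MB at a time."""
    digest = hashlib.md5()
    with open(path, "rb") as fp:
        for chunk in iter(lambda: fp.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_md5_file(path, md5_path):
    """Compare a file's digest to the first token stored in md5_path."""
    with open(md5_path) as fp:
        expected = fp.read().split()[0]
    return md5_of_file(path) == expected.lower()
```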

Needs to be changed if:

- We change hashing algorithms.

- We decide to add additional sanity checks to the beginning of the post-processing.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/CheckSgt

Author: Scott Callaghan

Dependencies: none

Executable chain:

CheckSgt.py

Compile instructions: none

Usage:

Usage: ./CheckSgt.py <sgt file> <md5 file>

Typical run configuration: Serial; for a 750 GB SGT, takes about 90 minutes.

Input files: RWG SGT, SGT MD5 sums

Output files: None

DirectSynth

DirectSynth is the code we currently use to perform the post-processing. For historical reasons, all of the codes used for CyberShake post-processing are documented here: CyberShake post-processing options (login required).

Purpose: To perform reciprocity calculations and produce seismograms, intensity measures, and duration measures.

Detailed description: DirectSynth reads in the SGTs across a group of processes, and hands out tasks (synthesis jobs) to worker processes. These worker processes read in rupture geometry information from disk and call the RupGen-api to generate full slip histories in memory. The workers request SGTs from the reader processes over MPI. X and Y component PSA calculations are performed from the resultant seismograms, and RotD and duration calculations are also performed, if requested. More details about the approach used are available at DirectSynth.

Needs to be changed if:

- We have new intensity measures or other calculations per seismogram to perform.

- We decide to change the post-processing algorithm.

- The wrapper needs to be modified if we want to set different custom environment variables.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/DirectSynth

Author: Scott Callaghan, original seismogram synthesis code by Rob Graves, X and Y component PSA code by David Okaya, RotD code by Christine Goulet

Dependencies: Getpar, libcfu, RupGen-api-v3.3.1, FFTW, libmemcached (optional) and memcached (optional)

Executable chain:

direct_synth_v3.3.1.py (current version, uses the Graves & Pitarka (2014) rupture generator)

utils/pegasus_wrappers/invoke_memcached.sh

memcached

bin/direct_synth

Compile instructions:

- Compile RupGen-api first.

- Edit the makefile in DirectSynth/src . Check the following variables:

- BASE_DIR should point to the top-level CyberShake install directory

- LIBCFU should point to the libcfu install directory

- V3_3_1_RG_LIB should point to the RupGen-api-3.3.1/lib directory

- LDLIBS should have the correct paths to the libcfu and libmemcached lib directories

- V3_3_1_RG_INC should point to the RupGen-api-3.3.1/include directory

- IFLAGS should have the correct paths to the libcfu and libmemcached include directories

- Run 'make direct_synth_v3.3.1' in DirectSynth/src.

You will also need to edit the hard-coded paths to memcached in direct_synth_v3.3.1.py, in lines 15 and 24.

Usage:

direct_synth_v3.3.1.py

stat=<site short name>

slat=<site lat>

slon=<site lon>

run_id=<run id>

sgt_handlers=<number of SGT handler processes; must be enough for the SGTs to be read into memory>

debug=<print logs for each process; 1 is yes, 0 no>

max_buf_mb=<buffer size in MB for each worker to use for storing SGT information>

rupture_spacing=<'uniform' or 'random' hypocenter spacing>

ntout=<nt for seismograms>

dtout=<dt for seismograms>

rup_list_file=<input file containing ruptures to process>

sgt_xfile=<input SGT X file>

sgt_yfile=<input SGT Y file>

x_header=<input SGT X header>

y_header=<input SGT Y header>

det_max_freq=<maximum frequency of deterministic part>

stoch_max_freq=<maximum frequency of stochastic part>

run_psa=<'1' to run X and Y component PSA, '0' to not>

run_rotd=<'1' to run RotD calculations, '0' to not>

run_durations=<'1' to run duration calculation, '0' to not>

simulation_out_pointsX=<'2', the number of components>

simulation_out_pointsY=1

simulation_out_timesamples=<same as ntout>

simulation_out_timeskip=<same as dtout>

surfseis_rspectra_seismogram_units=cmpersec

surfseis_rspectra_output_units=cmpersec2

surfseis_rspectra_output_type=aa

surfseis_rspectra_period=all

surfseis_rspectra_apply_filter_highHZ=<high filter, 5.0 for 1 Hz runs, 20.0 or higher for 10 Hz runs>

surfseis_rspectra_apply_byteswap=no

Typical run configuration: Parallel, typically on 3840 processors; for 750 GB SGTs with ~7000 ruptures, takes about 12 hours.

Input files: RWG SGT, SGT headers, rupture list file, rupture geometry files

Output files: Seismograms, PSA files, RotD files, Duration files

Data Product Codes

The software in this section takes the data products produced by the SGT and post-processing stages, adds some of it to the database, and creates final data products. Note that all these codes should be installed on a server close to the database, to reduce insertion and query time. Currently these are all installed on SCEC disks and accessed from shock.usc.edu.

Load Amps

Purpose: Load data from output files into the database.

Detailed description: This code loads either PSA, RotD, or Duration data into the database, depending on command-line options. It also performs sanity checks on the PSA data being inserted: values must be between 0.008 and 8400 cm/s^2. Some values below 0.008 are still passed through, if they come from a small-magnitude event at a large distance. If a value violates this constraint, LoadAmps aborts. Note that if LoadAmps needs to be rerun, sometimes the database must be cleaned out first, as data from the previous attempt may have been inserted successfully and will cause duplicate key errors if you try to insert the same data again.
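The range check on PSA values can be sketched as below. The bounds come from the description above; treating them as inclusive is an assumption, and the `allow_small` flag is a hypothetical stand-in for the small-magnitude, large-distance exemption, whose exact criteria are not given here. The real check is in the Java CyberCommands code.

```python
PSA_MIN = 0.008   # cm/s^2, lower bound from the description
PSA_MAX = 8400.0  # cm/s^2, upper bound from the description


def psa_value_ok(value, allow_small=False):
    """Return True if a PSA value passes the range check.

    `allow_small` stands in for the exemption for small events at
    large distances; how that is decided is not modeled here.
    """
    if value > PSA_MAX:
        return False
    if value < PSA_MIN:
        return allow_small
    return True
```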

Needs to be changed if:

- We change the sanity checks on data inserts.

- We modify the format of the PSA, RotD, or Duration files.

- We add new types of data to insert.

- We change the database schema.

- We add a new server. To add a new server, in addition to providing a command-line option for it, you will need to create a Hibernate config file. You can start with moment.cfg.xml or focal.cfg.xml and edit lines 7-16 appropriately.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/CyberCommands

Author: Joshua Garcia, Scott Callaghan

Dependencies: LoadAmps calls CyberCommands, a Java code with a long list of dependencies (all of these are checked into the Java project):

- Ant

- Apache Commons

- Hibernate

- MySQL bindings

- Xerces

- DOM4J

- Log4J

- Java 1.6+

Executable chain:

insert_dir.sh

CyberLoadAmps_SC

cybercommands_SC.jar

CyberLoadamps.java

Compile instructions: Check out CyberCommands into Eclipse. Create the cybercommands_SC.jar file using Eclipse's JAR build framework and the cybercommands_SC.jardesc description file. Install cybercommands_SC.jar and the required JAR files on the server. Point insert_dir.sh to CyberLoadAmps_SC, and CyberLoadAmps_SC to cybercommands_SC.jar.

Usage:

Usage: CyberLoadAmps [-r | -d | -z | -u][-c] [-d] [-periods periods] [-run RunID] [-p directory] [-server name] [-z] [-help] [-i insertion_values] [-u] [-f]

-i <insertion_values> Which values to insert -

gm: geometric mean PSA data (default)

xy: X and Y component PSA data

gmxy: Geometric mean and X and Y components

-run <RunID> Run ID - this option is required

-p <directory> file path with spectral acceleration files, either top-level directory or zip file - this option is required

-server <name> server name (focal, surface, intensity, moment, or csep-x) - this option is required

-periods <periods> Comma-delimited periods to insert

-c Convert values from g to cm/sec^2

-d Assume one BSA file per rupture, with embedded header information.

-f Don't apply value checks to insertion values; use with care!.

-help print this message

-r Read rotd files (instead of bsa.)

-u Read duration files (instead of bsa.)

-z Read zip files instead of bsa.

Typical run configuration: Serial; for 5 periods, takes about 10 minutes. The runtime depends heavily on the database and on contention from other database processes.

Input files: PSA files, RotD files, Duration files

Output files: none

Check DB Site

Purpose: Verify that data was correctly loaded into the database.

Detailed description: This code takes a list of components (or type IDs) to check for a run ID, and verifies that there is one entry for every rupture variation. If some rupture variations are missing, a file is produced which lists the missing source, rupture, rupture variation tuples.
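The core of this check is a set difference between the rupture variations that should exist and those found in the database. The sketch below shows that step in isolation; the function name is hypothetical, the database queries are omitted, and the actual implementation is the Java class CheckDBDataForSite.

```python
def find_missing_variations(expected, found):
    """Return sorted (source, rupture, rupture variation) tuples that are
    in `expected` but absent from `found`."""
    return sorted(set(expected) - set(found))
```

For example, if the expected tuples are (1, 0, 0), (1, 0, 1), and (2, 3, 0) and only the first and last are found, the result is the single tuple (1, 0, 1), which would then be written to the missing variations file.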

Needs to be changed if:

- Username, password, or database host are changed.

- We change the database schema.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/db/CheckDBDataForSite.java and DBConnect.java

Author: Scott Callaghan (CheckDBDataForSite.java), Nitin Gupta, Vipin Gupta, Phil Maechling (DBConnect.java)

Dependencies: Both are checked into the CyberShake project:

- Apache Commons

- MySQL bindings

Executable chain:

check_db.sh CheckDBDataForSite.java

Compile instructions: Check out CheckDBDataForSite.java and DBConnect.java. Compile them by running 'javac -classpath mysql-connector-java-5.0.5-bin.jar:commons-cli-1.0.jar DBConnect.java CheckDBDataForSite.java'. The paths to the MySQL bindings jar and the Apache Commons jar may be different depending on your installation.

Usage:

usage: CheckDBDataForSite

-p <periods> Comma-separated list of periods to check, for geometric

and rotd.

-t <type_ids> Comma-separated list of type IDs to check, for duration.

-c <component> Component type (geometric, rotd, duration) to check.

-h,--help Print help for CheckDBDataForSite

-o <output> Path to output file, if something is missing (required).

-r <run_id> Run ID to check (required).

-s <server> DB server to query against.

Typical run configuration: Serial; typically takes just a few seconds. Runtime depends heavily on database load and on contention from other database processes.

Input files: none

Output files: Missing variations file

DB Report

Purpose: Produce a database report, a data product which Rob Graves used for a time.

Detailed description: This code takes a run ID, queries the database for PSA values for all components, and writes the output to a text file. The list of periods and the DB config parameters are specified in an XML config file.

Needs to be changed if:

- Username, password, or database host are changed: the DB connection parameters in default.xml would need to be edited.

- We want results for different periods: edit default.xml.

- We change the database schema.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/reports/db_report_gen.py . default.xml in the same directory is also needed, and can be generated by editing and running conf_gen.py, also in the same directory.

Author: Kevin Milner

Dependencies: MySQLdb

Executable chain:

db_report_gen.py

Compile instructions: None, all code is Python.

Usage:

Usage: db_report_gen.py [options] SITE_SHORT_NAME

NOTE: defaults are loaded from defaults.xml and can be edited manually

or overridden with conf_gen.py

Options:

-h, --help show this help message and exit

-e ERF_ID, --erfID=ERF_ID

ERF ID for Report (default = none)

-f FILENAME, --file=FILENAME

Store Results to a file instead of STDOUT. If a

directory is given, a name will be auto generated.

-i, --id Flag for specifying site ID instead of Short Name

(default uses Short Name)

--hypo, --hpyocenter Flag for appending hypocenter locations to result

-l LIMIT, --limit=LIMIT

Limit the total number of rusults, or 0 for no limit

(default = 0)

-o, --sort SLOW: Force SQL Order By statement for sorting. It

will probably come out sorted, but if it doesn't, you

can use this. (default will not sort)

-p PERIODS, --periods=PERIODS

Comma separated period values (default = 3.0,5.0,10.0)

--pr, --print-runs Print run IDs for site and optionally ERF/Rup Var

Scen/SGT Var IDs

-r RUP_VAR_SCENARIO_ID, --rupVarID=RUP_VAR_SCENARIO_ID

Rupture Variation Scenario ID for Report (default =

none)

--ri=RUN_ID, --runID=RUN_ID

Allows you to specify a run ID to use (default uses

latest compatible run ID)

-R RUPTURE, --rupture=RUPTURE

Only give information on specified rupture. Must be

acompanied by -S/--source flag (default shows all

ruptures)

-s SGT_VAR_ID, --sgtVarID=SGT_VAR_ID

SGT Variation ID for Report (default = none)

-S SOURCE, --source=SOURCE

Only give information on specified source. To specify

rupture, see -R option (default shows all sources)

--s_im, --sort-ims Sort output by IM value (increasing)...may be slow!

-v, --verbose Verbosity Flag (default = False)

Typical run configuration: Serial; about 1 minute. Runtime depends heavily on database load and on contention from other database processes.

Input files: default.xml

Output files: DB Report file

Curve Calc

Purpose: Calculate CyberShake hazard curves alongside comparison GMPEs.

Detailed description: This code takes a run ID, component, and period, queries the database for the appropriate IM values, and calculates a hazard curve in the desired format. Comparison GMPE curves can also be plotted.

Needs to be changed if:

- Username, password, or database host are changed.

- We change the database schema.

- New IM types need to be supported.

Source code location: The CyberShake curve calculator is part of the OpenSHA codebase. The specific Java class is org.opensha.sha.cybershake.plot.HazardCurvePlotter (available via https://source.usc.edu/svn/opensha/trunk/src/org/opensha/sha/cybershake/plot/), but it has a complex set of Java dependencies. To compile and run, you should follow the instructions on http://www.opensha.org/trac/wiki/SettingUpEclipse to access the source. The curve calculator is also wrapped by curve_plot_wrapper.sh, in http://source.usc.edu/svn/cybershake/import/trunk/HazardCurveGeneration/curve_plot_wrapper.sh .

The OpenSHA project also has configuration files for various GMPEs, config files for UCERF2, and configuration files for output formats preferred by Tom and Rob, in src/org/opensha/sha/cybershake/conf.

Author: Kevin Milner

Dependencies: Standard OpenSHA dependencies

Executable chain:

curve_plot_wrapper.sh HazardCurvePlotter.java

Compile instructions: Use the OpenSHA build process if building from source.

Usage:

usage: HazardCurvePlotter [-?] [-af <arg>] [-benchmark] [-c] [-cmp <arg>]

[-comp <arg>] [-cvmvs] [-e <arg>] [-ef <arg>] [-f] [-fvs <arg>] [-h

<arg>] [-imid <arg>] [-imt <arg>] [-n] [-novm] [-o <arg>] [-p

<arg>] [-pf <arg>] [-pl <arg>] [-R <arg>] [-r <arg>] [-s <arg>]

[-sgt <arg>] [-sgtsym] [-t <arg>] [-v <arg>] [-vel <arg>] [-w

<arg>]

-?,--help Display this message

-af,--atten-rel-file <arg> XML Attenuation Relationship

description file(s) for comparison.

Multiple files should be comma

separated

-benchmark,--benchmark-test-recalc Forces recalculation of hazard

curves to test calculation speed.

Newly recalculated curves are not

kept and the original curves are

plotted.

-c,--calc-only Only calculate and insert the

CyberShake curves, don't make plots.

If a curve already exists, it will

be skipped.

-cmp,--component <arg> Intensity measure component.

Options: GEOM,X,Y,RotD100,RotD50,

Default: GEOM

-comp,--compare-to <arg> Compare to aspecific Run ID (or

multiple IDs, comma separated)

-cvmvs,--cvm-vs30 Option to use Vs30 value from the

velocity model itself in GMPE

calculations rather than, for

example, the Wills 2006 value.

-e,--erf-id <arg> ERF ID

-ef,--erf-file <arg> XML ERF description file for

comparison

-f,--force-add Flag to add curves to db without

prompt

-fvs,--force-vs30 <arg> Option to force the given Vs30 value

to be used in GMPE calculations.

-h,--height <arg> Plot height (default = 500)

-imid,--im-type-id <arg> Intensity measure type ID. If not

supplied, will be detected from im

type/component/period parameters

-imt,--im-type <arg> Intensity measure type. Options: SA,

Default: SA

-n,--no-add Flag to not automatically calculate

curves not in the database

-novm,--no-vm-colors Disables Velocity Model coloring

-o,--output-dir <arg> Output directory

-p,--period <arg> Period(s) to calculate. Multiple

periods should be comma separated

(default: 3)

-pf,--password-file <arg> Path to a file that contains the

username and password for inserting

curves into the database. Format

should be "user:pass"

-pl,--plot-chars-file <arg> Specify the path to a plot

characteristics XML file

-R,--run-id <arg> Run ID

-r,--rv-id <arg> Rupture Variation ID

-s,--site <arg> Site short name

-sgt,--sgt-var-id <arg> STG Variation ID

-sgtsym,--sgt-colors Enables SGT specific symbols

-t,--type <arg> Plot save type. Options are png,

pdf, jpg, and txt. Multiple types

can be comma separated (default is

pdf)

-v,--vs30 <arg> Specify default Vs30 for sites with

no Vs30 data, or leave blank for

default value. Otherwise, you will

be prompted to enter vs30

interactively if needed.

-vel,--vel-model-id <arg> Velocity Model ID

-w,--width <arg> Plot width (default = 600)

Typical run configuration: Serial; about 30 seconds per curve. Runtime depends heavily on database load and on contention from other database processes.

Input files: ERF config file, GMPE config files

Output files: Hazard Curve

Disaggregate

Purpose: Disaggregate the curve results to determine the largest contributing sources.

Detailed description: This code takes a run ID, a probability or IM level, and a period to disaggregate at. It produces disaggregation distance-magnitude plots and also a list of the % contribution of each source.

Needs to be changed if:

- Username, password, or database host are changed.

- We change the database schema.

- We want to support different kinds of disaggregation, or for a different kind of ERF.

Source code location: The Disaggregator is part of the OpenSHA codebase. The specific Java class is org.opensha.sha.cybershake.plot.DisaggregationPlotter (available via https://source.usc.edu/svn/opensha/trunk/src/org/opensha/sha/cybershake/plot/), but it has a complex set of Java dependencies. To compile and run, you should follow the instructions on http://www.opensha.org/trac/wiki/SettingUpEclipse to access the source. The disaggregator is also wrapped by disagg_plot_wrapper.sh, in http://source.usc.edu/svn/cybershake/import/trunk/HazardCurveGeneration/disagg_plot_wrapper.sh .

Author: Kevin Milner, Nitin Gupta, Vipin Gupta

Dependencies: Standard OpenSHA dependencies

Executable chain:

disagg_plot_wrapper.sh DisaggregationPlotter.java

Compile instructions: Use the standard OpenSHA building process if building from source.

Usage:

usage: DisaggregationPlotter [-?] [-af <arg>] [-cmp <arg>] [-e <arg>]

[-fvs <arg>] [-i <arg>] [-imid <arg>] [-imt <arg>] [-o <arg>] [-p

<arg>] [-pr <arg>] [-r <arg>] [-R <arg>] [-s <arg>] [-sgt <arg>]

[-t <arg>] [-vel <arg>]

-?,--help Display this message

-af,--atten-rel-file <arg> XML Attenuation Relationship description

file(s) for comparison. Multiple files

should be comma separated

-cmp,--component <arg> Intensity measure component. Options:

GEOM,X,Y,RotD100,RotD50, Default: GEOM

-e,--erf-id <arg> ERF ID

-fvs,--force-vs30 <arg> Option to force the given Vs30 value to be

used in GMPE calculations.

-i,--imls <arg> Intensity Measure Levels (IMLs) to

disaggregate at. Multiple IMLs should be

comma separated.

-imid,--im-type-id <arg> Intensity measure type ID. If not supplied,

will be detected from im

type/component/period parameters

-imt,--im-type <arg> Intensity measure type. Options: SA,

Default: SA

-o,--output-dir <arg> Output directory

-p,--period <arg> Period(s) to calculate. Multiple periods

should be comma separated (default: 3)

-pr,--probs <arg> Probabilities (1 year) to disaggregate at.

Multiple probabilities should be comma

separated.

-r,--rv-id <arg> Rupture Variation ID

-R,--run-id <arg> Run ID

-s,--site <arg> Site short name

-sgt,--sgt-var-id <arg> STG Variation ID

-t,--type <arg> Plot save type. Options are png, pdf, and

txt. Multiple types can be comma separated

(default is pdf)

-vel,--vel-model-id <arg> Velocity Model ID

Typical run configuration: Serial; typically takes about 30 seconds. Runtime depends heavily on database load and on contention from other database processes.

Input files: none

Output files: Disaggregation file

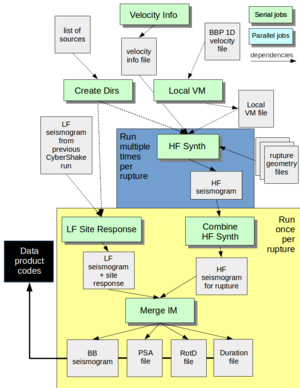

Stochastic codes

With CyberShake, we also have the option to augment a completed run with stochastic seismograms. The following codes are used to add stochastic high-frequency content to an already-completed low-frequency deterministic run.

Velocity Info

Purpose: To determine slowness-averaged VsX values for a CyberShake site, from UCVM.

Detailed description: Velocity Info takes a location, a velocity model, and grid spacing information and queries UCVM to generate three VsX values needed by the site response:

- Vs30, calculated as: 30 / sum( 1 / (Vs sampled from [0.5, 29.5] at 1 meter increments, for 30 values) )

- Vs5H, like Vs30 but calculated over the shallowest 5*gridspacing meters. So if gridspacing=100m, Vs5H = 500 / sum( 1 / (Vs sampled from [0.5, 499.5] at 1 meter increments, for 500 values) )

- VsD5H, like Vs30, but calculated over gridspacing increments, instead of 1 meter. The start and end are weighted half as much. So if gridspacing=100m, VsD5H = 5 / sum( w / (Vs sampled from [0, 500] at 100 meter increments, for 6 values) ), where w = 0.5 for the first and last samples and 1 otherwise.
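The three slowness averages above can be sketched in Python as follows. This is an illustration of the formulas only, not the retrieve_vs.c implementation: vs_at_depth is a hypothetical callable standing in for the UCVM query, and the function names are assumptions.

```python
def slowness_average(vs_samples, weights=None):
    """Return sum(weights) / sum(weight / Vs) for the given samples."""
    if weights is None:
        weights = [1.0] * len(vs_samples)
    total_slowness = sum(w / vs for w, vs in zip(weights, vs_samples))
    return sum(weights) / total_slowness


def vsx_values(vs_at_depth, gridspacing_m):
    """Compute (Vs30, Vs5H, VsD5H).

    vs_at_depth: hypothetical callable, depth in meters -> Vs in m/s.
    gridspacing_m: grid spacing in meters.
    """
    # Vs30: 30 samples at 0.5, 1.5, ..., 29.5 m.
    vs30 = slowness_average([vs_at_depth(d + 0.5) for d in range(30)])
    # Vs5H: the same 1 m sampling over the shallowest 5 * gridspacing meters.
    n_5h = int(5 * gridspacing_m)
    vs5h = slowness_average([vs_at_depth(d + 0.5) for d in range(n_5h)])
    # VsD5H: samples at 0, H, ..., 5H, with the first and last weighted half.
    samples = [vs_at_depth(i * gridspacing_m) for i in range(6)]
    vsd5h = slowness_average(samples, [0.5, 1.0, 1.0, 1.0, 1.0, 0.5])
    return vs30, vs5h, vsd5h


def two_layer(depth_m):
    """Hypothetical profile: 200 m/s above 250 m depth, 800 m/s below."""
    return 200.0 if depth_m < 250 else 800.0


vs30, vs5h, vsd5h = vsx_values(two_layer, 100)
```

Because the VsD5H weights sum to 5, the numerator matches the 5 in the formula above.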

Needs to be changed if:

- We need to support more than one model - for instance, if the CyberShake site box (not simulation volume) spans multiple models. The code to parse the model string and load models in initialize_ucvm() would need to be changed.

- We want to support new kinds of velocity values.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/HFSim_mem/src/retrieve_vs.c

Author: Scott Callaghan

Dependencies: UCVM

Executable chain:

retrieve_vs

Compile instructions: Run 'make retrieve_vs'

Usage:

Usage: ./retrieve_vs <lon> <lat> <model> <gridspacing> <out filename>

Typical run configuration: Serial; takes about 15 seconds.

Input files: None

Output files: Velocity Info file

Local VM

Purpose: To generate a "local" 1D velocity file, required for the high-frequency codes.

Detailed description: Local VM takes in an input file containing a 1D velocity model. It then calculates Qs from these values and writes all the velocity data to a new file. For all Study 15.12 runs, we used the LA Basin 1D model from the BBP, v14.3.0. It's registered in the RLS, and is located at /home/scec-02/cybershk/runs/genslip_nr_generic1d-gp01.vmod.

Needs to be changed if:

- We change the algorithm for calculating Qs.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/HFSim_mem/gen_local_vel.py

Author: Scott Callaghan, modified from Rob Graves' code

Dependencies: None

Executable chain:

gen_local_vel.py

Compile instructions: None

Usage:

Usage: ./gen_local_vel.py <1D velocity model> <output>

Typical run configuration: Serial; takes less than a second.

Input files: BBP 1D velocity file

Output files: Local VM file

Create Dirs

Purpose: To create a directory for each source.

Detailed description: The high-frequency codes produce many intermediate files. To avoid overloading the filesystem, Create Dirs creates a separate directory for every source.

Needs to be changed if: This code is basically just a wrapper around mkdir, and is unlikely to need changes.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/HFSim_mem/create_dirs.py

Author: Scott Callaghan

Dependencies: None

Executable chain:

create_dirs.py

Compile instructions: None

Usage:

Usage: ./create_dirs.py <file with list of dirs>

Typical run configuration: Serial, takes just a few seconds.

Input files: File with a directory to create (a source ID) on each line.

Output files: None.

HF Synth

Purpose: HF Synth generates a high-frequency stochastic seismogram for one or more rupture variations.

Detailed description: This code wraps multiple broadband platform codes to reduce the number of invocations required. Specifically, it calls:

- srf2stoch_lite(), a reduced-memory version of srf2stoch. We have modified it to call rupgen_genslip() to generate the SRF, rather than reading it in from disk.

- hfsim(), a wrapper for:

- hb_high(), Rob Graves's original BBP code to produce the seismograms

- wcc_getpeak(), which calculates PGA for the seismogram

- wcc_siteamp14(), which performs site amplification.

Vs30 is required, so if it is not passed as a command-line argument, UCVM is called to determine it.

Additionally, hf_synth_lite is able to handle processing on multiple rupture variations, to further reduce the number of invocations.

Needs to be changed if:

- A new version of one of Rob's codes - the high-frequency generator or the site amplification - is needed. We have tried to use whatever the most recent version is on the BBP, for consistency.

- New velocity parameters are needed for the site amplification.

- The format of the rupture geometry files changes.

The makefile needs to be changed if the path to libmemcached, UCVM, Getpar, or the rupture generator changes.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/HFSim_mem

Author: wrapper by Scott Callaghan, hb_high(), wcc_getpeak(), and wcc_siteamp14() by Rob Graves

Dependencies: Getpar, UCVM, rupture generator, libmemcached

Executable chain:

hf_synth_lite

Compile instructions: Run 'make' in src.

Usage: There is no 'help' usage string, but here's a sample invocation:

/projects/sciteam/jmz/CyberShake/software/HFSim_mem/bin/hf_synth_lite stat=OSI slat=34.6145 slon=-118.7235 rup_geom_file=e36_rv6_121_0.txt source_id=121 rupture_id=0 num_rup_vars=5 rup_vars=(0,0,0);(1,1,0);(2,2,0);(3,3,0);(4,4,0) outfile=121/Seismogram_OSI_4331_121_0_hf_t0.grm dx=2.0 dy=2.0 tlen=300.0 dt=0.025 do_site_response=1 vs30=359.1 debug=0 vmod=LA_Basin_BBP_14.3.0.local

Typical run configuration: Serial; takes from a few seconds up to a minute per rupture variation, depending on the size of the fault surface.

Input files: Local velocity file, rupture geometry file

Output files: High-frequency seismograms, in the general seismogram format.

Combine HF Synth

Purpose: This code combines the seismograms produced by HF Synth so that there is just 1 seismogram per source/rupture combo.

Detailed description: Since we split up work so that each HF Synth job takes a chunk of rupture variations, we may end up with multiple seismogram files per rupture, each containing some of the rupture variations. This script concatenates the files, using cat, into a single file, ready to be worked on later in the workflow.
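The concatenation step can be sketched in Python as below. Note that this is a pure-Python stand-in for illustration: as described above, combine_seis.py itself invokes cat, and the function name here is hypothetical.

```python
import shutil


def combine_seismograms(input_paths, output_path):
    """Concatenate the input files, in order, into output_path."""
    with open(output_path, "wb") as out:
        for path in input_paths:
            with open(path, "rb") as src:
                # Stream the bytes across so large seismogram files
                # are never held fully in memory.
                shutil.copyfileobj(src, out)
```

The inputs are written in the order given, so the caller decides how the rupture variations are ordered in the combined file.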

Needs to be changed if: I can't think of a circumstance where we would need to change this.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/HFSim_mem/combine_seis.py

Author: Scott Callaghan

Dependencies: None

Executable chain:

combine_seis.py cat

Compile instructions: None

Usage:

Usage: ./combine_seis.py <seis 0> <seis 1> ... <seis N> <output seis name>

Typical run configuration: Serial; takes a few seconds.

Input files: High-frequency seismograms, in the general seismogram format.

Output files: A single high-frequency seismogram, in the general seismogram format.

LF Site Response

Purpose: This code performs site response modifications to the CyberShake low-frequency seismograms.

Detailed description: The LF Site Response code takes a low-frequency seismogram and some velocity parameters, and outputs a seismogram with site response applied. In Study 15.12, this was a necessary step before combining the low and high frequency seismograms together. Since Vs30 is required, if it's not passed as a command-line argument, then UCVM is called to determine it.

The reason we calculate site response for the low-frequency deterministic seismograms is that we want both the low- and high-frequency results to be for the same site-response condition. For the HF, we used Vs30 directly for the site-response adjustment, but for the LF we had to use an adjusted VsX value since the grid spacing was 100 m (so Vs30 doesn't make sense).

Needs to be changed if:

- We change the site response algorithm.

- We decide to use different velocity parameters for setting site response.

- The format of the seismogram files changes.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/LF_Site_Response

Author: wrapper by Scott Callaghan, site response by Rob Graves

Dependencies: Getpar, UCVM, rupture generator, libmemcached

Executable chain:

lf_site_response

Compile instructions: Edit the makefile to point to RupGen, libmemcached, and Getpar, then run 'make' in the src directory.

Usage: Sample invocation:

./lf_site_response seis_in=Seismogram_OSI_3923_263_3.grm seis_out=263/Seismogram_OSI_3923_263_3_site_response.grm slat=34.6145 slon=-118.7235 module=cb2014 vs30=359.1 vref=344.7

Typical run configuration: Serial; takes less than a second.

Input files: A low-frequency deterministic seismogram, in the general seismogram format.

Output files: A low-frequency deterministic seismogram with site response, in the general seismogram format.

Merge IM

Purpose: This code combines low-frequency deterministic and high-frequency stochastic seismograms, then processes them to obtain intensity measures.

Detailed description: The Merge IM code takes an LF and HF seismogram and performs the following processing:

- A high-pass filter is applied to the HF seismogram.

- The LF seismogram is resampled to the same dt as the HF seismogram.

- The two seismograms are combined into a single broadband (BB) seismogram.

- The PSA code is run on the resulting seismogram.

- If desired, the RotD and duration codes are also run on the seismogram.

Merge IM works on a seismogram file at the rupture level, so it assumes that the input files contain multiple rupture variations.

Needs to be changed if:

- We change the filter-and-combine algorithm.

- We decide to modify the post-processing and IM types we want to capture.

- The format of the seismogram files changes.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/MergeIM

Author: Scott Callaghan, Rob Graves

Dependencies: Getpar

Executable chain:

merge_psa

Compile instructions: Edit the makefile to point to Getpar, then run 'make' in the src directory.

Usage: Sample invocation:

./merge_psa lf_seis=182/Seismogram_OSI_3923_182_23_site_response.grm hf_seis=182/Seismogram_OSI_4331_182_23_hf.grm seis_out=182/Seismogram_OSI_4331_182_23_bb.grm freq=1.0 comps=2 num_rup_vars=16 simulation_out_pointsX=2 simulation_out_pointsY=1 simulation_out_timesamples=12000 simulation_out_timeskip=0.025 surfseis_rspectra_seismogram_units=cmpersec surfseis_rspectra_output_units=cmpersec2 surfseis_rspectra_output_type=aa surfseis_rspectra_period=all surfseis_rspectra_apply_filter_highHZ=20.0 surfseis_rspectra_apply_byteswap=no out=182/PeakVals_OSI_4331_182_23_bb.bsa run_rotd=1 rotd_out=182/RotD_OSI_4331_182_23_bb.rotd run_duration=1 duration_out=182/Duration_OSI_4331_182_23_bb.dur

Typical run configuration: Serial; takes 5-30 seconds, depending on the number of rupture variations in the files.

Input files: LF deterministic seismogram and HF stochastic seismogram, in the general seismogram format.

Output files: BB seismogram, in the general seismogram format; also PSA files, RotD files, and Duration files

File types

Modelbox

Purpose: Contains a description of the simulation box, at the surface.

Filename convention: <site>.modelbox

Format:

<site name>

APPROXIMATE CENTROID:

clon= <centroid lon> clat =<centroid lat>

MODEL PARAMETERS:

mlon= <model lon> mlat =<model lat> mrot=<model rot, default -55> xlen= <x-length in km> ylen= <y-length in km>

MODEL CORNERS:

<lon 1> <lat 1> (x= 0.000 y= 0.000)

<lon 2> <lat 2> (x= <max x> y= 0.000)

<lon 3> <lat 3> (x= <max x> y= <max y>)

<lon 4> <lat 4> (x= 0.000 y= <max y>)

Generated by: PreCVM

Used by: PreSGT, PostAWP

Gridfile

Purpose: Specify the three dimensions, and gridspacing in each dimension, of the volume.

Filename convention: gridfile_<site>

Format:

xlen=<x-length in km>

0.0 <x-length> <grid spacing in km>

ylen=<y-length in km>

0.0 <y-length> <grid spacing in km>

zlen=<z-length in km>

0.0 <z-length> <grid spacing in km>

Gridout

Purpose: Specify the km offsets for each grid index, in X, Y, and Z, from the upper southwest corner.

Filename convention: gridout_<site>

Format:

xlen=<x-length in km>

nx=<number of gridpoints in X direction>

0 0 <grid spacing>

1 <grid spacing> <grid spacing>

2 <2*grid spacing> <grid spacing>

3 <3*grid spacing> <grid spacing>

...

nx-1 <(nx-1)*grid spacing> <grid spacing>

ylen=<y-length in km>

ny=<number of gridpoints in Y direction>

0 0 <grid spacing>

1 <grid spacing> <grid spacing>

...

ny-1 <(ny-1)*grid spacing> <grid spacing>

zlen=<z-length in km>

nz=<number of gridpoints in Z direction>

0 0 <grid spacing>

1 <grid spacing> <grid spacing>

...

nz-1 <(nz-1)*grid spacing> <grid spacing>

Generated by: PreCVM

Used by: UCVM, smoothing, PreSGT, PreAWP

Params

Purpose: Succinctly specify the parameters for the CyberShake volume. Similar information to the modelbox file, but in a different format.

Filename convention: model_params_GC_<site> (GC stands for 'great circle', the projection we use).

Format:

Model origin coordinates:

lon= <model lon> lat= <model lat> rotate= <model rotation, default -55>

Model origin shift (cartesian vs. geographic):

xshift(km)= <x shift, usually half the x-length minus 1 grid spacing> yshift(km)= <y-shift, usually half the y-length minus 1 grid spacing>

Model corners:

c1= <nw lon> <nw lat>

c2= <ne lon> <ne lat>

c3= <se lon> <se lat>

c4= <sw lon> <sw lat>

Model Dimensions:

xlen= <x-length> km

ylen= <y-length> km

zlen= <z-length> km

Generated by: PreCVM

Used by:

Coord

Purpose: Specify the mapping of latitude and longitude to X and Y offsets, for each point on the surface.

Filename convention: model_coords_GC_<site> (GC stands for 'great circle', the projection we use).

Format:

<lon> <lat> 0 0

<lon> <lat> 1 0

<lon> <lat> 2 0

...

<lon> <lat> <nx-1> 0

<lon> <lat> 0 1

...

<lon> <lat> <nx-1> 1

...

<lon> <lat> <nx-1> <ny-1>

Generated by: PreCVM

Used by: UCVM, smoothing, PreSGT

Bounds

Purpose: Specify the mapping of latitude and longitude to X and Y offsets, but only for the points along the boundary. A subset of the coord file.

Filename convention: model_bounds_GC_<site> (GC stands for 'great circle', the projection we use).

Format:

<lon> <lat> 0 0

<lon> <lat> 1 0

<lon> <lat> 2 0

...

<lon> <lat> <nx-1> 0

<lon> <lat> 0 1

<lon> <lat> <nx-1> 1

<lon> <lat> 0 2

<lon> <lat> <nx-1> 2

...

<lon> <lat> 0 <ny-1>

<lon> <lat> 1 <ny-1>

...

<lon> <lat> <nx-1> <ny-1>

Generated by: PreCVM

Used by:

Velocity files

RWG format

Purpose: Input velocity files for the RWG wave propagation code, emod3d.

Filename convention: v_sgt-<site>.<p, s, or d>

Format: 3 files, one each for Vp (*.p), Vs (*.s), and rho (*.d). Each is binary, with 4-byte floats, in fast X, Z (surface->down), slow Y order.

Generated by: UCVM

Used by: PreAWP
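Given the ordering described above (X fastest, then Z, with Y slowest), the byte offset of a single grid point in one of the RWG velocity files can be computed directly. The following Python sketch illustrates this; the function names are hypothetical, and the little-endian byte order is an assumption, since the byte order is not stated here.

```python
import struct

FLOAT_BYTES = 4  # each value is a 4-byte float


def rwg_velocity_offset(x, y, z, nx, nz):
    """Byte offset of grid point (x, y, z): X fastest, then Z, Y slowest."""
    return FLOAT_BYTES * ((y * nz + z) * nx + x)


def read_rwg_value(path, x, y, z, nx, nz, byte_order="<"):
    """Read one value from a .p, .s, or .d file.

    byte_order "<" (little-endian) is an assumption; pass ">" if the
    files were written big-endian.
    """
    with open(path, "rb") as f:
        f.seek(rwg_velocity_offset(x, y, z, nx, nz))
        return struct.unpack(byte_order + "f", f.read(FLOAT_BYTES))[0]
```

Here z counts grid points downward from the surface, matching the surface->down ordering noted in the format description.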

AWP format

Purpose: Input velocity file for the AWP-ODC wave propagation code.

Filename convention: awp.<site>.media

Format: Binary, with 4-byte floats, in fast Y, X, slow Z (surface down) order.

Generated by: UCVM

Used by: Smoothing, PreAWP, PostAWP

Fdloc

Purpose: Coordinates of the site, in X Y grid indices, and therefore the coordinates where the SGT impulse should be placed.

Filename convention: <site>.fdloc

Format:

<X grid index of site> <Y grid index of site>

Generated by: PreSGT

Used by: PreAWP, PostAWP

Faultlist

Purpose: List of paths to all the rupture geometry files for all ruptures which are within the cutoff for this site. Used to produce a list of points to save SGTs for.

Filename convention: <site>.faultlist

Format:

<path to rupture file> nheader=<number of header lines, usually 6> latfirst=<1, to signify that latitude comes first in the rupture files> ...

Generated by: PreSGT

Used by: PreSGT

Radiusfile

Purpose: Describe the adaptive mesh SGTs will be saved for.

Filename convention: <site>.radiusfile

Format:

<number of gradations in X and Y>

<radius 1> <radius 2> <radius 3> <radius 4>

<decimation less than radius 1> <decimation between radius 1 and 2> <between 2 and 3> <between 3 and 4>

<number of gradations in Z>

<depth 1> <depth 2> <depth 3> <depth 4>

<decimation less than depth 1> <decimation between depth 1 and 2> <between 2 and 3> <between 3 and 4>

Generated by: PreSGT

Used by: PreSGT
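To illustrate how the radius and decimation rows of a radiusfile relate, the Python sketch below looks up the decimation that applies at a given distance. It is an assumption-laden illustration rather than the PreSGT code: the function name, the example values, the handling of a distance exactly equal to a radius, and the treatment of distances beyond the last radius are all hypothetical.

```python
import bisect


def decimation_for(distance, radii, decimations):
    """Return the decimation for a distance, or None beyond the last radius.

    radii: increasing cutoffs (radius 1..N).
    decimations: decimations[i] applies below radii[i].
    """
    # Number of radii that are <= distance selects the band.
    band = bisect.bisect_right(radii, distance)
    if band >= len(decimations):
        return None  # assumption: nothing is saved past the outermost radius
    return decimations[band]


# Hypothetical values, for illustration only.
radii = [10.0, 50.0, 100.0, 1000.0]
decimations = [10, 15, 25, 50]
```

The same lookup would apply to the depth and Z-decimation rows.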

SGT Coordinate files

There are two formats for the list of points to save SGTs for, one for Rob's codes and one for AWP-ODC. As with other coordinate transformations between the two systems, to convert X and Y offsets from RWG to AWP you have to flip the X and Y and add 1 to each, since RWG is 0-indexed and AWP is 1-indexed.
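The index conversion described above is small enough to sketch directly in Python. The function names are hypothetical; the logic follows the rule stated in the text (swap X and Y, then add 1 to each).

```python
def rwg_to_awp(rwg_x, rwg_y):
    """Convert 0-indexed RWG (x, y) to 1-indexed AWP (x, y)."""
    return rwg_y + 1, rwg_x + 1


def awp_to_rwg(awp_x, awp_y):
    """Inverse conversion: 1-indexed AWP (x, y) to 0-indexed RWG (x, y)."""
    return awp_y - 1, awp_x - 1
```

For example, RWG point (10, 20) corresponds to AWP point (21, 11).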

SgtCoords

Purpose: List of all the points to save SGTs for.

Filename convention: <site>.cordfile

Format: Z changes fastest, then Y, then X slowest.

# geoproj= <projection; we usually use 1 for great circle> # modellon= <model lon> modellat= <model lat> modelrot= <model rot, usually -55> # xlen= <x-length> ylen= <y-length> # <total number of points> <X index> <Y index> <Z index> <Single long to capture the index, in the form XXXXYYYYZZZZ> <lon> <lat> <depth in km> ...

Generated by: PreSGT

Used by: PreSGT, PreAWP, PostAWP
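The single-long index in the cordfile packs the three grid indices into one decimal number. The Python sketch below shows one way to pack and unpack it, assuming a fixed number of decimal digits per component. The default of 4 digits matches the XXXXYYYYZZZZ form shown above; the digit width is left as a parameter because the width used by a given code version is an assumption here, and the function names are hypothetical.

```python
def pack_index(x, y, z, digits=4):
    """Pack X, Y, Z grid indices into a single integer."""
    base = 10 ** digits
    if not all(0 <= v < base for v in (x, y, z)):
        raise ValueError("each index must fit in %d decimal digits" % digits)
    return (x * base + y) * base + z


def unpack_index(packed, digits=4):
    """Recover (x, y, z) from a packed index."""
    base = 10 ** digits
    packed, z = divmod(packed, base)
    x, y = divmod(packed, base)
    return x, y, z
```

With the default width, indices (12, 345, 6) pack to 1203450006.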

AWP cordfile

Purpose: List of SGT points to save in a format usable by AWP-ODC-SGT.

Filename convention: awp.<site>.cordfile

Format: Remember that X and Y are flipped and have 1 added from RWG. The points are sorted by Y, then X, then Z, so Y changes slowest and Z changes fastest. This is flipped from the RWG cordfile because X and Y components are swapped.

<number of points>

<X coordinate> <Y coordinate> <Z coordinate>

...

Generated by: PreAWP

Used by: AWP-ODC-SGT CPU, AWP-ODC-SGT GPU

Impulse source descriptions

We generate the initial source description for CyberShake, with the required dt, nt, and filtering, using gen_source, in http://source.usc.edu/svn/cybershake/import/trunk/SimSgt_V3.0.3/src/ (run 'make get_source'). gen_source hard-codes its parameters, but you should only change 'nt', 'dt', and 'flo'. We have been setting flo to twice the CyberShake maximum frequency, to reduce filtering effects at the frequency of interest. gen_source wraps Rob Graves's source generator, which we use for consistency.

Once this RWG source is generated, we then use AWP-GPU-SGT/utils/data/format_source.py to reprocess the RWG source into an AWP-source friendly format. This involves reformatting the file and multiplying all values by 1e15 for unit conversion. Different files must be produced for X and Y coordinates, since in the AWP format different columns are used for different components.

Finally, AWP-GPU-SGT/utils/build_src.py takes the correct AWP-friendly source (nt and dt) for a run and adds the impulse location coordinates, producing a complete AWP format source description.

RWG source

Purpose: Source description for the SGT impulse.

Filename convention: source_cos0.10_<frequency>hz

Format:

source cos <nt> <dt> 0 0 0.0 0.0 0.0 0.0 <value at ts0> <value at ts1> <value at ts2> <value at ts3> <value at ts4> <value at ts5> <value at ts6> <value at ts7> <value at ts8> <value at ts9> <value at ts10> <value at ts11> ...

Generated by: gen_source (see above)

Used by: PreAWP

AWP source

Purpose: Source description which can be used by AWP-ODC.

Filename convention: <site>_f<x or y>_src

Format: Note that X and Y coordinates are swapped between RWG and AWP format, because of how the box is defined. Additionally, RWG is 0-indexed, and AWP is 1-indexed, and the RWG values must be multiplied by 1e15 for unit conversion.

<X index of source, same as site X index> <Y index of source, same as site Y index>

<XX impulse at ts0> <YY at ts0> <ZZ at ts0> <XY at ts0> <XZ at ts0> <YZ at ts0>

...

Generated by: PreAWP

Used by: AWP-ODC-SGT CPU, AWP-ODC-SGT GPU

IN3D

Purpose: Input file for AWP-ODC.

Filename convention: IN3D.<site>.<x or y>

Format: Specified here (login required).

Generated by: PreAWP

Used by: AWP-ODC-SGT CPU, AWP-ODC-SGT GPU, PostAWP

AWP SGT

Purpose: SGT file, created by AWP-ODC-SGT.

Filename convention: awp-strain-<site>-f<x or y>

Format: binary, 4-byte floats. Points are in the same order as in the AWP SGT coordinate file, which is fast Z, X, Y. For each point, the SGT components are stored in XX, YY, ZZ, XY, XZ, YZ order, with time fastest.

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, 1st z-coordinate), XX component>

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, 1st z-coordinate), YY component>

...

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, 1st z-coordinate), YZ component>

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, 2nd z-coordinate), XX component>

...

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, last z-coordinate), YZ component>

<timeseries for nt steps, for (2nd x-coordinate, 1st y-coordinate, 1st z-coordinate), XX component>

...

<timeseries for nt steps, for (last x-coordinate, 1st y-coordinate, last z-coordinate), YZ component>

<timeseries for nt steps, for (1st x-coordinate, 2nd y-coordinate, 1st z-coordinate), XX component>

...

<timeseries for nt steps, for (last x-coordinate, last y-coordinate, last z-coordinate), YZ component>

Generated by: AWP-ODC-SGT CPU and GPU

Used by: PostAWP, NanCheck

RWG SGT

Purpose: SGT file, created by PostAWP for use in post-processing.

Filename convention: <site>_f<x or y>_<run id>.sgt

Format: binary, 4-byte floats. Points are in the same order as in the RWG coordinate file, which is fast Z, Y, X. For each point, the SGT components are stored in XX, YY, ZZ, XY, XZ, YZ order, with time fastest.

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, 1st z-coordinate), XX component>

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, 1st z-coordinate), YY component>

...

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, 1st z-coordinate), YZ component>

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, 2nd z-coordinate), XX component>

...

<timeseries for nt steps, for (1st x-coordinate, 1st y-coordinate, last z-coordinate), YZ component>

<timeseries for nt steps, for (1st x-coordinate, 2nd y-coordinate, 1st z-coordinate), XX component>

...

<timeseries for nt steps, for (1st x-coordinate, last y-coordinate, last z-coordinate), YZ component>

<timeseries for nt steps, for (2nd x-coordinate, 1st y-coordinate, 1st z-coordinate), XX component>

...

<timeseries for nt steps, for (last x-coordinate, last y-coordinate, last z-coordinate), YZ component>

Generated by: PostAWP

Used by: CheckSgt, DirectSynth

SGT header file

Purpose: SGT header information, used to parse and understand SGT files

Filename convention: <site>_f<x or y>_<run id>.sgthead

Format: binary. It consists of three sections:

- The sgtmaster structure, described below in C. Its information can be used to set up data structures to read the rest of the SGTs.

- The sgtindex structures, described below in C. There is one of these for each point in the SGTs, and they're used to determine the X/Y/Z indices of all the SGT points. Note that the current way of packing the X, Y, and Z indices into the long long indx field allows six decimal digits per component, so each index must be less than 1,000,000.

- The sgtheader structures, described below in C. There is one of these for each point in the SGTs. They're used when we perform reciprocity.

struct sgtmaster

{

int geoproj; /* =0: RWG local flat earth; =1: RWG great circle arcs; =2: UTM */

float modellon; /* longitude of geographic origin */

float modellat; /* latitude of geographic origin */

float modelrot; /* rotation of y-axis from south (clockwise positive) */

float xshift; /* xshift of cartesian origin from geographic origin */

float yshift; /* yshift of cartesian origin from geographic origin */

int globnp; /* total number of SGT locations (entire model) */

int localnp; /* local number of SGT locations (this file only) */

int nt; /* number of time points */

};

struct sgtindex /* indices for all 'globnp' SGT locations */

{

long long indx; /* indx= xsgt*1000000000000 + ysgt*1000000 + zsgt */

int xsgt; /* x grid location */

int ysgt; /* y grid location */

int zsgt; /* z grid location */

float h; /* grid spacing */

};
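The indx packing given in the comment above can be checked with a few lines of Python. This is a minimal sketch with hypothetical function names; it only restates the formula xsgt*1000000000000 + ysgt*1000000 + zsgt and its inverse, and rejects indices which would not fit in six decimal digits.

```python
X_FACTOR = 1_000_000_000_000  # 10**12, multiplier for xsgt
Y_FACTOR = 1_000_000          # 10**6, multiplier for ysgt


def pack_indx(xsgt, ysgt, zsgt):
    """Combine three grid indices into one integer, as in struct sgtindex."""
    for value in (xsgt, ysgt, zsgt):
        if not 0 <= value < Y_FACTOR:
            raise ValueError("each index must fit in six decimal digits")
    return xsgt * X_FACTOR + ysgt * Y_FACTOR + zsgt


def unpack_indx(indx):
    """Recover (xsgt, ysgt, zsgt) from a packed index."""
    xsgt, rest = divmod(indx, X_FACTOR)
    ysgt, zsgt = divmod(rest, Y_FACTOR)
    return xsgt, ysgt, zsgt


packed = pack_indx(12, 345, 6)
unpacked = unpack_indx(packed)
```

The largest value this scheme can produce, 999999999999999999, still fits in a signed 64-bit integer.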

struct sgtheader

{

long long indx; /* index of this SGT */

int geoproj; /* =0: RWG local flat earth; =1: RWG great circle arcs; =2: UTM */

float modellon; /* longitude of geographic origin */

float modellat; /* latitude of geographic origin */

float modelrot; /* rotation of y-axis from south (clockwise positive) */

float xshift; /* xshift of cartesian origin from geographic origin */

float yshift; /* yshift of cartesian origin from geographic origin */

int nt; /* number of time points */

float xazim; /* azimuth of X-axis in FD model (clockwise from north) */

float dt; /* time sampling */

float tst; /* start time of 1st point in GF */

float h; /* grid spacing */

float src_lat; /* site latitude */

float src_lon; /* site longitude */

float src_dep; /* site depth */

int xsrc; /* x grid location for source (station in recip. exp.) */

int ysrc; /* y grid location for source (station in recip. exp.) */

int zsrc; /* z grid location for source (station in recip. exp.) */

float sgt_lat; /* SGT location latitude */

float sgt_lon; /* SGT location longitude */

float sgt_dep; /* SGT location depth */

int xsgt; /* x grid location for output (source in recip. exp.) */

int ysgt; /* y grid location for output (source in recip. exp.) */

int zsgt; /* z grid location for output (source in recip. exp.) */

float cdist; /* straight-line distance btw site and SGT location */

float lam; /* lambda [in dyne/(cm*cm)] at output point */

float mu; /* rigidity [in dyne/(cm*cm)] at output point */

float rho; /* density [in gm/(cm*cm*cm)] at output point */

float xmom; /* moment strength of x-oriented force in this run */

float ymom; /* moment strength of y-oriented force in this run */

float zmom; /* moment strength of z-oriented force in this run */

};

Overall, then, the format for the file is:

<sgtmaster> <sgtindex for point 1> <sgtindex for point 2> ... <sgtindex for point globnp> <sgtheader for point 1> <sgtheader for point 2> ... <sgtheader for point globnp>
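Given this layout, the start of each section can be computed from the structure sizes. The following Python sketch mirrors the three C structures with the standard struct module. It assumes little-endian data and no compiler padding beyond what the field order already implies (36, 24, and 128 bytes respectively); both assumptions should be verified against a real file before relying on them. The function names are hypothetical.

```python
import struct

# Assumed on-disk layouts (little-endian, no extra padding).
SGTMASTER = struct.Struct("<i5f3i")            # 36 bytes
SGTINDEX = struct.Struct("<q3if")              # 24 bytes
SGTHEADER = struct.Struct("<qi5fi7f3i3f3i7f")  # 128 bytes

MASTER_FIELDS = ("geoproj", "modellon", "modellat", "modelrot",
                 "xshift", "yshift", "globnp", "localnp", "nt")


def read_sgtmaster(buf):
    """Decode the sgtmaster structure at the start of a header file."""
    return dict(zip(MASTER_FIELDS, SGTMASTER.unpack_from(buf, 0)))


def section_offsets(globnp):
    """Return (start of sgtindex array, start of sgtheader array, file size)."""
    index_start = SGTMASTER.size
    header_start = index_start + globnp * SGTINDEX.size
    file_size = header_start + globnp * SGTHEADER.size
    return index_start, header_start, file_size


# Made-up sgtmaster contents, used only to exercise the functions.
example = SGTMASTER.pack(2, -118.0, 34.0, -55.0, 0.0, 0.0, 10, 10, 2000)
master = read_sgtmaster(example)
offsets = section_offsets(master["globnp"])
```

Comparing the computed file size with the actual size of a .sgthead file is a quick way to test whether the assumed layout matches.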

Generated by: PostAWP

Used by: DirectSynth

Velocity Info file

Purpose: Contains the 3D velocity information needed for stochastic jobs

Filename convention: velocity_info_<site>.txt

Format: Text format, three lines:

Vs30 = <Vs30 value>

Vs500 = <Vs500 value>

VsD500 = <VsD500 value>
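A file in this format is simple to read back. The Python sketch below is one possible parser, not the code the DAX generator uses; the function name is hypothetical and the numeric values in the sample are made up.

```python
def parse_velocity_info(text):
    """Parse 'name = value' entries, one per line, into a dict of floats."""
    values = {}
    for line in text.splitlines():
        if "=" not in line:
            continue  # skip blank or unrelated lines
        key, _, value = line.partition("=")
        values[key.strip()] = float(value)
    return values


# Made-up sample contents for illustration only.
sample = "Vs30 = 305.2\nVs500 = 612.0\nVsD500 = 540.5\n"
info = parse_velocity_info(sample)
```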

Generated by: Velocity Info job

Used by: Sub Stoch DAX generator, to add these values as command-line arguments to HF Synth and LF Site Response jobs.

BBP 1D Velocity file

Purpose: Contains 1D velocity profile information

Filename convention: The only one currently in use in CyberShake is /home/scec-02/cybershk/runs/genslip_nr_generic1d-gp01.vmod .

Format: Text format:

<number of thickness layers L>

<layer 1 thickness in km> <Vp> <Vs> <density> <not used> <not used>

<layer 2 thickness in km> <Vp> <Vs> <density> <not used> <not used>

...

<layer L thickness in km> <Vp> <Vs> <density> <not used> <not used>

Note that the last layer has thickness 999.0.
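To make the layout concrete, here is a minimal Python sketch which reads a profile in this format. It assumes the values are whitespace-separated and ignores the two unused columns; the function name is hypothetical and the sample numbers are made up rather than taken from the real .vmod file.

```python
def parse_velocity_profile(text):
    """Parse a layer count followed by six values per layer."""
    tokens = text.split()
    num_layers = int(tokens[0])
    values = [float(tok) for tok in tokens[1:1 + 6 * num_layers]]
    if len(values) != 6 * num_layers:
        raise ValueError("file ends before all layers are read")
    layers = []
    for i in range(num_layers):
        thickness, vp, vs, density = values[6 * i:6 * i + 4]
        layers.append({"thickness_km": thickness, "vp": vp,
                       "vs": vs, "density": density})
    return layers


# Made-up two-layer profile; the last layer uses the 999.0 thickness convention.
sample = "2\n0.1 1.7 0.45 2.0 0 0\n999.0 7.8 4.5 3.3 0 0\n"
layers = parse_velocity_profile(sample)
```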

Generated by: Rob Graves

Used by: Local VM job

Local VM file

Purpose: Contains 1D velocity profile information for use with stochastic codes

Filename convention: The only one currently in use in CyberShake is LA_Basin_BBP_14.3.0.local .

Format: Text format:

<number of thickness layers L>

<layer 1 thickness in km> <Vp> <Vs> <density> <Qs> <Qs>

<layer 2 thickness in km> <Vp> <Vs> <density> <Qs> <Qs>

...

<layer L thickness in km> <Vp> <Vs> <density> <Qs> <Qs>

Note that the last layer has thickness '0.0', indicating it has no bottom.

Generated by: Local VM Job

Used by:

Missing variations file

Purpose: Lists the variations which the Check DB stage has found are missing.

Filename convention: DB_Check_Out_<PSA or RotD or Duration>_<site>

Format: For each source and rupture pair with missing variations, the following record is output in text format:

<source ID> <rupture ID> <number N of missing rupture variations> <ID of first missing rupture variation> <ID of second missing rupture variation> ... <ID of Nth missing rupture variation>
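The record layout can be read with a short parser such as the Python sketch below. It assumes one record per line with whitespace-separated integer IDs; the function name is hypothetical and the IDs in the sample are made up.

```python
def parse_missing_variations(text):
    """Map (source ID, rupture ID) to the list of missing rupture variation IDs."""
    missing = {}
    for line in text.splitlines():
        fields = [int(tok) for tok in line.split()]
        if not fields:
            continue  # skip blank lines
        source_id, rupture_id, count = fields[:3]
        variation_ids = fields[3:]
        if len(variation_ids) != count:
            raise ValueError("record does not list the stated number of variations")
        missing[(source_id, rupture_id)] = variation_ids
    return missing


# Made-up sample: two source/rupture pairs with missing variations.
sample = "12 3 2 0 5\n40 1 1 7\n"
missing = parse_missing_variations(sample)
```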

Originally, a file in this format could be fed directly back into the DAX generator, but that capability has not been used for many years and may no longer be functional.

Generated by: Check DB Site

Used by: none

DB Report file

Purpose: Provides PSA data for a run in a text format.

Filename convention: <site>_ERF<erf id>_report_<date>.txt

Format: It's a text file with the following header:

Site_Name ERF_ID Source_ID Rupture_ID Rup_Var_ID Rup_Var_Scenario_ID Mag Prob Grid_Spacing Num_Rows Num_Columns Period Component SA

The file is sorted with Rup_Var_ID varying fastest, followed by Rupture_ID, Source_ID, and Period, with Component varying slowest.
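As an illustration of this ordering, the Python sketch below parses a report and builds a sort key which lists the columns from slowest to fastest. It assumes whitespace-separated columns, integer IDs, and a Component column that compares as a string; the function names are hypothetical and every value in the sample rows is made up.

```python
def parse_report(text):
    """Return one dict per data row, keyed by the names in the header line."""
    lines = [line for line in text.splitlines() if line.strip()]
    header = lines[0].split()
    return [dict(zip(header, line.split())) for line in lines[1:]]


def sort_key(row):
    """Sort key ordered from the slowest-varying column to the fastest."""
    return (row["Component"], float(row["Period"]), int(row["Source_ID"]),
            int(row["Rupture_ID"]), int(row["Rup_Var_ID"]))


# Made-up sample rows for illustration only.
sample = (
    "Site_Name ERF_ID Source_ID Rupture_ID Rup_Var_ID Rup_Var_Scenario_ID "
    "Mag Prob Grid_Spacing Num_Rows Num_Columns Period Component SA\n"
    "USC 36 0 0 0 1 6.5 0.001 0.0 0 0 3.0 X 0.01\n"
    "USC 36 0 0 1 1 6.5 0.001 0.0 0 0 3.0 X 0.02\n"
    "USC 36 0 1 0 1 6.5 0.001 0.0 0 0 3.0 X 0.03\n"
)
rows = parse_report(sample)
is_sorted = rows == sorted(rows, key=sort_key)
```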

Generated by: DB Report

Used by: none, output data product

Hazard Curve

Purpose: Contains a hazard curve in text, PNG, or PDF format.

Filename convention: <site>_ERF<erf id>_Run<run id>_<IM type>_<period>sec_<IM component>_<date run completed>.<pdf|txt|png>

Format: The PNG and PDF formats contain an image of the curve. The PDF format also has an extended legend. The TXT file contains a list of (X,Y) points which describe the curve.

Generated by: Curve Calc

Used by: none, output data product

Disaggregation file

Purpose: Contains disaggregation results for a single run in text, PNG, or PDF format.

Filename convention: <site>_ERF<erf id>_Run<run_id>_Disagg<POE|IM>_<disagg level>_<IM type>_<period>sec_<run date>.<txt|png|pdf>

Format: The PNG and PDF formats contain a plot of the disaggregation results, showing magnitude vs distance and color-coding based on epsilon. The PDF and TXT formats contain additional information about individual source contributions, in the following format:

Summary data

Parameters used to create disaggregation

Disaggregation bin data:

Dist Mag <breakout by epsilon values>

<Breakdown of contribution by distance, magnitude, and epsilon range>

Disaggregation Source List Info:

Source# %Contribution TotExceedRate SourceName DistRup DistX DistSeis DistJB

<list of contributing sources, in decreasing order of % contribution>

Generated by: Disaggregation

Used by: none, output data product

Dependencies

The following are external software dependencies used by CyberShake software modules.

Getpar

Purpose: A library written in C which enables parsing of key-value command-line parameters, and enforcement of required parameters. Rob Graves uses it in his codes.

How to obtain: Rob supplied a copy; it is in the CyberShake repository at http://source.usc.edu/svn/cybershake/import/trunk/Getpar/ .

Special installation instructions: Run 'make' in Getpar/getpar/src; this will make the library, libget.a, and install it in the lib directory, where CyberShake codes will expect it.

MySQLdb

Purpose: MySQL bindings for Python 2.

How to obtain: https://sourceforge.net/projects/mysql-python/ . Documentation is at http://mysql-python.sourceforge.net/MySQLdb.html .