Difference between revisions of "CyberShake Workflow Framework"

Revision as of 21:56, 13 February 2018

This page provides documentation on the workflow framework that we use to execute CyberShake.

As of 2018, we use shock.usc.edu as our workflow submit host for CyberShake, but this setup could be replicated on any machine.

Overview

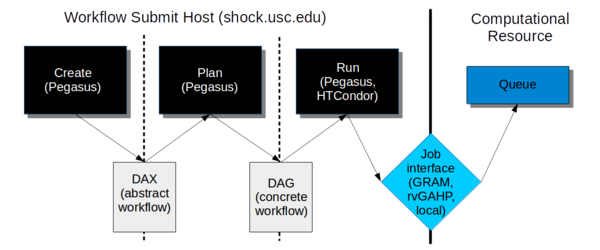

The workflow framework consists of three phases:

- Create. In this phase, we use the Pegasus API to create an "abstract description" of the workflow, so named because it does not have the specific paths or runtime configuration information for any system. It is a general description of the tasks to execute in the workflow, the input and output files, and the dependencies between them. This description is called a DAX and is in XML format.

- Plan. In this phase, we use the Pegasus planner to take our abstract description and convert it into a "concrete description" for execution on one or more specific computational resources. At this stage, paths to executables and system-specific configuration information (such as the correct certificate to use and what account to charge) are added to the workflow. This description is called a DAG and consists of multiple files in a format expected by HTCondor.

- Run. In this phase, we use Pegasus to hand our DAG off to HTCondor, which supervises the execution of the workflow. HTCondor maintains a queue of jobs. Jobs with all their dependencies satisfied are eligible to run and are sent to their execution system.
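The eligibility rule in the Run phase (a job may run once all of its dependencies are satisfied) can be illustrated with a minimal sketch. This is not how HTCondor or DAGMan is implemented; it is a toy scheduler, and the job names in the example are hypothetical.

```python
from collections import defaultdict, deque


def run_order(dependencies):
    """Return job names in an order that respects their dependencies.

    `dependencies` maps each job name to the set of jobs it depends on.
    A job becomes eligible only after every one of its parents has run.
    """
    remaining = {job: set(parents) for job, parents in dependencies.items()}
    children = defaultdict(list)
    for job, parents in dependencies.items():
        for parent in parents:
            children[parent].append(job)

    # Jobs with no dependencies are eligible immediately.
    ready = deque(sorted(job for job, parents in remaining.items() if not parents))
    order = []
    while ready:
        job = ready.popleft()
        order.append(job)
        for child in sorted(children[job]):
            remaining[child].discard(job)
            if not remaining[child]:
                ready.append(child)

    if len(order) != len(dependencies):
        raise ValueError("workflow has a cycle or an undefined dependency")
    return order


# Hypothetical job names, for illustration only.
workflow = {
    "velocity_mesh": set(),
    "sgt_x": {"velocity_mesh"},
    "sgt_y": {"velocity_mesh"},
    "post_sgt": {"sgt_x", "sgt_y"},
}
print(run_order(workflow))
```

In this example, `post_sgt` is queued only after both `sgt_x` and `sgt_y` have finished, which mirrors the rule described for the HTCondor queue.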

We will go into each phase in more detail below.

Software Dependencies

Before attempting to create and execute a workflow, the following software dependencies must be satisfied.

Workflow Submit Host

In addition to Java 1.7+, we require the following software packages on the workflow submission host:

Pegasus-WMS

Purpose: We use Pegasus to create our workflow description and plan it for execution with HTCondor. Pegasus also provides a number of other features, such as automatically adding transfer jobs, wrapping jobs in kickstart for runtime statistics, using monitord to populate a database with metrics, and registering output files in a catalog.

How to obtain: You can download the latest version (binaries) from https://pegasus.isi.edu/downloads/, or clone the git repository at https://github.com/pegasus-isi/pegasus. We usually clone the repository, because we regularly need new features implemented and tested. We are currently running 4.7.3dev on shock, but you should install the latest version.

Special installation instructions: Follow the instructions to build from source - it's straightforward. On shock, we install Pegasus into /opt/pegasus, and update the default link to point to our preferred version.

HTCondor

Purpose: HTCondor performs the actual execution of the workflow. DAGMan, a component of HTCondor, maintains a queue and keeps track of job dependencies, submitting jobs to the appropriate resource when it's time for them to run.

How to obtain: HTCondor is available at https://research.cs.wisc.edu/htcondor/downloads/, but installing from a repo is the preferred approach. We are currently running 8.4.8 on shock. You should install the 'Current Stable Release'.

Special installation instructions: We've been asking the system administrator to install it in shared space.

rvGAHP

To enable rvGAHP submission for systems that don't support remote authentication using proxies (currently Titan), you should configure rvGAHP on the submission host as described at https://github.com/juve/rvgahp.

Remote Resource

Pegasus-WMS

On the remote resource, only the Pegasus-worker packages are technically required, but I recommend installing all of Pegasus anyway. This may also require installing Ant. Typically, we install Pegasus to <CyberShake home>/utils/pegasus.

GRAM submission

To support GRAM submission, the Globus Toolkit (http://toolkit.globus.org/toolkit/) needs to be installed on the remote system. It's already installed at Titan and Blue Waters. Since the toolkit reached end-of-life in January 2018, there is some uncertainty as to what we will move to.

rvGAHP submission

For rvGAHP submission - our approach for systems which do not permit the use of proxies for remote authentication - you should follow the instructions at https://github.com/juve/rvgahp to set up rvGAHP correctly.

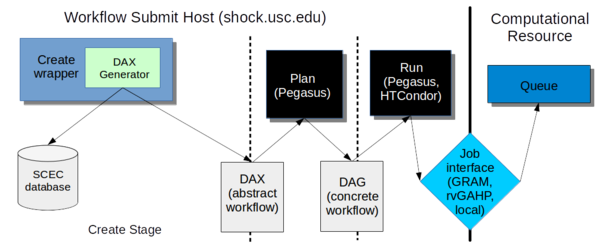

Create

In the creation stage, we invoke a wrapper script which calls the DAX Generator, which creates the DAX file for a CyberShake workflow.
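To make the idea of an abstract workflow description concrete, here is a heavily simplified sketch that builds a DAX-like XML document with Python's standard library. It is not the DAX Generator and its output is not schema-validated; the `adag`, `job`, `child`, and `parent` element names follow the DAX 3.x layout as an assumption, and the job IDs and transformation names are hypothetical.

```python
import xml.etree.ElementTree as ET


def build_abstract_workflow(name, jobs, dependencies):
    """Build a simplified DAX-like XML tree.

    `jobs` maps a job ID to the name of the task it runs.
    `dependencies` maps a child job ID to a list of parent job IDs.
    No paths or site-specific settings appear: those are added at planning.
    """
    root = ET.Element("adag", {"name": name})
    for job_id, task_name in jobs.items():
        ET.SubElement(root, "job", {"id": job_id, "name": task_name})
    for child_id, parent_ids in dependencies.items():
        child = ET.SubElement(root, "child", {"ref": child_id})
        for parent_id in parent_ids:
            ET.SubElement(child, "parent", {"ref": parent_id})
    return root


# Hypothetical two-job workflow: a mesh job followed by an SGT job.
root = build_abstract_workflow(
    "example_sgt_workflow",
    jobs={"ID01": "make_mesh", "ID02": "run_sgt"},
    dependencies={"ID02": ["ID01"]},
)
xml_text = ET.tostring(root, encoding="unicode")
print(xml_text)
```

The point of the sketch is that the description names tasks and the dependencies between them, but says nothing about where or how they execute.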

At creation, we decide which workflow we want to run: SGT only, post-processing only, or both ('integrated'). We offer this choice because we often want to run the SGT and post-processing workflows in different places. For example, we might want to run the SGT workflow on Titan because of the large number of GPUs, and then the post-processing on Blue Waters because of its XE nodes. If you create an integrated workflow, both the SGT and post-processing parts will run on the same machine. (This is because of the way that we've set up the planning scripts; this could be changed in the future if needed.)

Wrapper Script

Four wrapper scripts are available, depending on which kind of workflow you are creating.

create_sgt_dax.sh

Purpose: To create the DAX files for an SGT-only workflow.

Detailed description: create_sgt_dax.sh takes the command-line arguments and, by querying the database, determines a Run ID to assign to the run. The entry corresponding to this run in the database is either updated or created. The Run ID is then added to the file <site short name>_SGT_dax/run_table.txt. The script then compiles the DAX Generator (in case any changes have been made) and runs it with the correct command-line arguments, creating the DAXes. Next, a directory, <site short name>_SGT_dax/run_<run id>, is created, and the DAX files are moved into that directory. Finally, the run in the database is edited to reflect that creation is complete.
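The file layout described above can be summarized in a short sketch. The function name is hypothetical and is not part of create_sgt_dax.sh; only the two path patterns come from the description, and the site name and Run ID in the example are made up.

```python
from pathlib import PurePosixPath


def sgt_dax_layout(site_short_name, run_id):
    """Return the run table and run directory paths for an SGT workflow."""
    base = PurePosixPath(f"{site_short_name}_SGT_dax")
    return {
        # File that accumulates the Run IDs assigned to this site.
        "run_table": base / "run_table.txt",
        # Directory that the generated DAX files are moved into.
        "run_dir": base / f"run_{run_id}",
    }


# Hypothetical site short name and Run ID.
layout = sgt_dax_layout("USC", 1234)
print(layout["run_table"])  # USC_SGT_dax/run_table.txt
print(layout["run_dir"])    # USC_SGT_dax/run_1234
```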

Needs to be changed if:

- New velocity models are added. The wrapper encodes a mapping between a string and the velocity model ID in lines 129-152, and a new entry must be added for a new model.

- New science parameters need to be tracked. Currently the site name, ERF ID, SGT variation ID, rupture variation scenario ID, velocity model ID, frequency, and source frequency are used. If new parameters are important, they would need to be captured, included in the run_table file, and passed to the Run Manager (which would also need to be edited to include them).

- Arguments to the DAX Generator change.

Source code location: http://source.usc.edu/svn/cybershake/import/trunk/scripts/create_sgt_dax.sh

Author: Scott Callaghan

Dependencies: Run Manager

Executable chain:

create_sgt_dax.sh

Compile instructions: None

Usage:

    Usage: ./create_sgt_dax.sh [-h] <-v VELOCITY_MODEL> <-e ERF_ID> <-r RV_ID> <-g SGT_ID> <-f FREQ> <-s SITE> [-q SRC_FREQ] [SGT dax generator args]
        -h                   display this help and exit
        -v VELOCITY_MODEL    select velocity model, one of v4 (CVM-S4), vh (CVM-H), vsi (CVM-S4.26), vs1 (SCEC 1D model), vhng (CVM-H, no GTL), or vbbp (BBP 1D model).
        -e ERF_ID            ERF ID
        -r RUP_VAR_ID        Rupture Variation ID
        -g SGT_ID            SGT ID
        -f FREQ              Simulation frequency (0.5 or 1.0 supported)
        -q SRC_FREQ          Optional: SGT source filter frequency
        -s SITE              Site short name

    Can be followed by optional SGT arguments:
    usage: CyberShake_SGT_DAXGen <output filename> <destination directory>
           [options] [-f <runID file, one per line> | -r <runID1> <runID2> ... ]
     -d,--handoff            Run handoff job, which puts SGT into pending file
                             on shock when completed.
     -f <runID_file>         File containing list of Run IDs to use.
     -h,--help               Print help for CyberShake_SGT_DAXGen
     -mc <max_cores>         Maximum number of cores to use for AWP SGT code.
     -mv <minvs>             Override the minimum Vs value
     -ns,--no-smoothing      Turn off smoothing (default is to smooth)
     -r <runID_list>         List of Run IDs to use.
     -sm,--separate-md5      Run md5 jobs separately from PostAWP jobs
                             (default is to combine).
     -sp <spacing>           Override the default grid spacing, in km.
     -sr,--server <server>   Server to use for site parameters and to insert
                             PSA values into
     -ss <sgt_site>          Site to run SGT workflows on (optional)
     -sv,--split-velocity    Use separate velocity generation and merge jobs
                             (default is to use combined job)
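As an illustration of the usage above, the following sketch assembles an argument list for create_sgt_dax.sh and checks the two constraints stated in the help text (the velocity model labels and the supported frequencies). The helper function is hypothetical and not part of CyberShake, and the site name and ID values in the example are made up.

```python
# Velocity model labels and descriptions as listed in the help text.
VELOCITY_MODELS = {
    "v4": "CVM-S4",
    "vh": "CVM-H",
    "vsi": "CVM-S4.26",
    "vs1": "SCEC 1D model",
    "vhng": "CVM-H, no GTL",
    "vbbp": "BBP 1D model",
}
SUPPORTED_FREQS = (0.5, 1.0)


def build_create_sgt_command(site, velocity_model, erf_id, rup_var_id,
                             sgt_id, freq, src_freq=None, daxgen_args=()):
    """Return the argument list for one create_sgt_dax.sh invocation."""
    if velocity_model not in VELOCITY_MODELS:
        raise ValueError(f"unknown velocity model: {velocity_model}")
    if freq not in SUPPORTED_FREQS:
        raise ValueError(f"unsupported frequency: {freq}")
    cmd = [
        "./create_sgt_dax.sh",
        "-v", velocity_model,
        "-e", str(erf_id),
        "-r", str(rup_var_id),
        "-g", str(sgt_id),
        "-f", str(freq),
        "-s", site,
    ]
    if src_freq is not None:
        cmd += ["-q", str(src_freq)]
    # Anything left over is passed through to the SGT DAX generator.
    cmd += list(daxgen_args)
    return cmd


# Hypothetical site and IDs, for illustration only.
command = build_create_sgt_command("USC", "vsi", 36, 6, 8, 1.0,
                                   daxgen_args=["-sp", "0.2"])
print(" ".join(command))
```

The resulting list could be handed to `subprocess.run` on the submit host; building it as a list avoids shell quoting problems.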

Input files: None

Output files: DAX files (schema is defined here: https://pegasus.isi.edu/documentation/schemas/dax-3.6/dax-3.6.html)

- SGT workflow only: create_sgt_dax.sh

- Post-processing workflow: create_pp_wf.sh

DAX Generator

The DAX Generator consists of multiple complex Java classes. It supports a large number of arguments, enabling the user to select the CyberShake science parameters (site, ERF, velocity model, rupture variation scenario ID, frequency, spacing, minimum Vs cutoff) and the technical parameters (which post-processing code to select, which SGT code, etc.) to perform the run with. Different entry classes support creating just an SGT workflow, just a post-processing (+ data product creation) workflow, or both combined into an integrated workflow.

A detailed overview of the DAX Generator is available here: CyberShake DAX Generator.