Difference between revisions of "CyberShake Study 15.4"

Revision as of 21:22, 9 March 2015

CyberShake Study 15.3 is a computational study to calculate one physics-based probabilistic seismic hazard model for Southern California at 1 Hz, using CVM-S4.26, the GPU implementation of AWP-ODC-SGT, the Graves and Pitarka (2015) rupture variations with uniform hypocenters, and the UCERF2 ERF. The SGT calculations will be split between NCSA Blue Waters and OLCF Titan, and the post-processing will be done entirely on Blue Waters. The goal is to calculate the standard Southern California site list (286 sites) used in previous CyberShake studies so we can produce comparison curves and maps, and data products for the UGMS Committee.

Preparation for production runs

- Check list of Mayssa's concerns

- Update DAX to support separate MD5 sums

- Add MD5 sum job to TC

- Evaluate topology-aware scheduling

- Get DirectSynth working at full run scale, verify results

- Modify workflow to have md5sums be in parallel

- Test of 1 Hz simulation with 2 Hz source - 2/27

- Add a third pilot job type to Titan pilots - 2/27

- Run test of full 1 Hz SGT workflow on Blue Waters - 3/4

- Add cleanup to workflow and test - 3/4

- Test interface between Titan workflows and Blue Waters workflows - 3/4

- Add capability to have files on Blue Waters correctly striped - 3/6

- Add restart capability to DirectSynth - 3/6

- File ticket for extended walltime for small jobs on Titan - 3/6

- Add DirectSynth to workflow tools - 3/6

- Implement and test parallel version of reformat_awp - 3/6

- Simulate curves for 3 sites with final configuration; compare curves and seismograms - 3/11

- File ticket for 90-day purged space at Blue Waters - 3/13

- File ticket for reservation at Blue Waters, along with justification - 3/13

- Follow up on high priority jobs at Titan - 3/13

- Create study description file for Run Manager - 3/13

- Science readiness review - 3/18

- Technical readiness review - 3/18

Computational Status

We are hoping to begin this study in early March.

Data Products

Goals

Science Goals

- Calculate a 1 Hz hazard map of Southern California.

- Produce a contour map at 1 Hz for the UGMS Committee.

- Compare the hazard maps at 0.5 Hz and 1 Hz.

Technical Goals

- Show that Titan can be integrated into our CyberShake workflows.

- Demonstrate scalability for 1 Hz calculations.

- Show that we can split the SGT calculations across sites.

Verification

DirectSynth

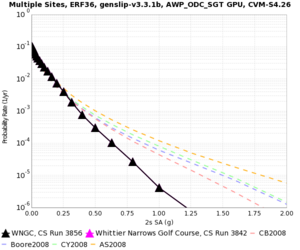

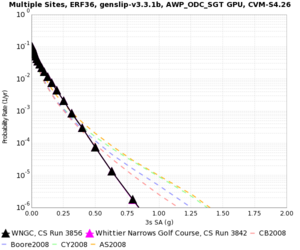

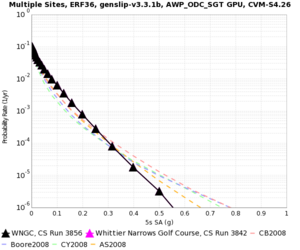

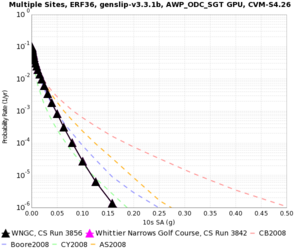

A comparison of 1 Hz results with SeisPSA to results with DirectSynth for WNGC. SeisPSA results are in magenta, DirectSynth results are in black. They're so close it's difficult to make out the magenta.

| 2s | 3s | 5s | 10s |

|---|---|---|---|

2 Hz source

Source comparisons are available at CyberShake Source Filtering.

Blue Waters vs Titan for SGT calculation

Sites

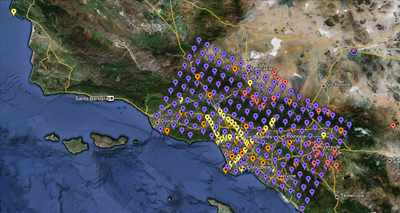

We are proposing to run 286 sites around Southern California: 46 points of interest, 27 precarious rock sites, 23 broadband station locations, 43 sites on a 20 km grid, and 147 sites on a 10 km grid. All of them fall within the Southern California box except for Diablo Canyon and Pioneer Town. The site list is available as a CSV file and as a KML file.
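The category counts in the site breakdown can be tallied to confirm that they account for all 286 sites. A minimal Python check follows; the dictionary keys are descriptive labels chosen for this example, not names used by the CyberShake tools.

```python
# Site categories and counts as listed in the site description.
site_counts = {
    "points of interest": 46,
    "precarious rock sites": 27,
    "broadband station locations": 23,
    "20 km gridded sites": 43,
    "10 km gridded sites": 147,
}

# Sum of all categories; this should match the 286 sites proposed for the study.
total_sites = sum(site_counts.values())
print(total_sites)
```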

Performance Enhancements (over Study 14.2)

Responses to Study 14.2 Lessons Learned

- AWP_ODC_GPU code, under certain situations, produced incorrect filenames.

This was fixed during the Study 14.2 run.

- Incorrect dependency in DAX generator - NanCheckY was a child of AWP_SGTx.

This was fixed during the Study 14.2 run.

- Try out Pegasus cleanup - accidentally blew away running directory using find, and later accidentally deleted about 400 sets of SGTs.

We have added cleanup to the SGT workflow, since that's where most of the extra data is generated, especially with two copies of the SGTs (the ones generated by AWP-ODC-GPU, and then the reformatted ones).

- 50 connections per IP is too many for the hpc-login2 GridFTP server; it brings the server down. Try using a dedicated server next time with more aggregated files.

We have moved our USC GridFTP transfer endpoint to hpc-scec.usc.edu, which does very little other than GridFTP transfers.

SGT codes

PP codes

- We have switched from using extract_sgt for the SGT extraction and SeisPSA for the seismogram synthesis to DirectSynth, a code which reads in the SGTs across multiple cores and then uses MPI to send them directly to workers, which perform the seismogram synthesis. We anticipate this code will give us an efficiency improvement of at least 50% over the old approach, since it does not require the writing and reading of the extracted SGT files.
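The data flow described for DirectSynth (SGTs held in memory across several readers, then handed directly to workers that synthesize seismograms, with no intermediate extracted-SGT files) can be illustrated with a small sketch. This is not the DirectSynth code: it is a toy Python model that assumes threads and in-memory lookups as stand-ins for MPI ranks and messages, and a slip-weighted sum as a placeholder for the real synthesis. All function names, parameters, and data layouts here are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def partition_sgts(sgts, n_handlers):
    """Split SGT points round-robin across handlers, as if each reader
    process had loaded its own slice of the SGT files into memory."""
    handlers = [dict() for _ in range(n_handlers)]
    for i, (point_id, series) in enumerate(sorted(sgts.items())):
        handlers[i % n_handlers][point_id] = series
    return handlers


def fetch(handlers, point_id):
    """Stand-in for an MPI request/reply: find the handler that owns the
    requested point and return its time series straight from memory."""
    for handler in handlers:
        if point_id in handler:
            return handler[point_id]
    raise KeyError(point_id)


def synthesize(handlers, rupture):
    """Placeholder synthesis (assumption): slip-weighted sum of the SGT
    time series for every point the rupture touches."""
    seismogram = None
    for point_id, slip in rupture["points"]:
        series = fetch(handlers, point_id)
        if seismogram is None:
            seismogram = [0.0] * len(series)
        for t, value in enumerate(series):
            seismogram[t] += slip * value
    return rupture["id"], seismogram


def run(sgts, ruptures, n_handlers=2, n_workers=3):
    """Distribute SGTs across handlers, then let workers pull what they
    need and synthesize; nothing is written to disk in between."""
    handlers = partition_sgts(sgts, n_handlers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        return dict(pool.map(lambda r: synthesize(handlers, r), ruptures))
```

In this toy model, `run` would be called with a mapping from point IDs to time series and a list of ruptures, each naming the points it needs and a slip weight per point. The relevant design point is that workers obtain SGT data by request from whichever handler holds it, which is where the saving over writing and re-reading extracted SGT files is expected to come from.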