CyberShake Study 18.8

Revision as of 03:49, 2 March 2018

CyberShake Study 18.3 is a computational study to run CyberShake in a new region, the extended Bay Area. We plan to use a combination of 3D models (USGS Bay Area detailed and regional, CVM-S4.26.M01, CCA-06) with a minimum Vs of 500 m/s and a frequency of 1 Hz. We will use the GPU implementation of AWP-ODC-SGT, the Graves & Pitarka (2014) rupture variations with 200m spacing and uniform hypocenters, and the UCERF2 ERF. Both the SGT and the post-processing calculations will be run on NCSA Blue Waters and OLCF Titan.


Status

This study is under development. We hope to begin in March 2018.

Science Goals

The science goals for this study are:

- Expand CyberShake to the Bay Area.

- Calculate CyberShake results with the USGS Bay Area velocity model as the primary model.

Technical Goals

- Perform the largest CyberShake study to date.

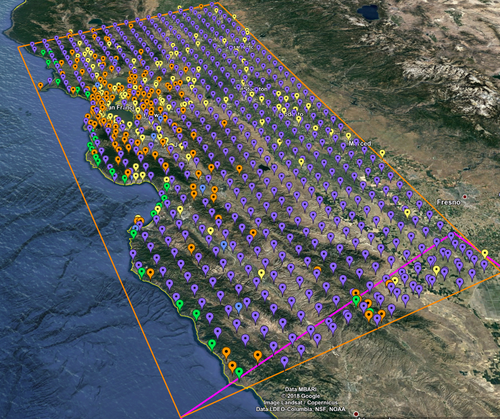

Sites

The Study 18.3 box is 180 x 390 km, with the long edge rotated 27 degrees counter-clockwise from vertical. The corners are defined to be:

- South: (-121.51,35.52)

- West: (-123.48,38.66)

- North: (-121.62,39.39)

- East: (-119.71,36.22)
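The sketch below is a quick consistency check on the corner coordinates. It measures each edge of the box and the rotation of the long edge using a local equirectangular (flat-Earth) approximation, which is assumed to be adequate at this scale. It is not how the CyberShake box is actually defined. The function names are illustrative only.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; spherical-Earth assumption

# Study 18.3 box corners as (longitude, latitude) in degrees, from the list above.
CORNERS = {
    "South": (-121.51, 35.52),
    "West": (-123.48, 38.66),
    "North": (-121.62, 39.39),
    "East": (-119.71, 36.22),
}


def local_offset_km(p, q):
    """East/north offset (km) from p to q, using an equirectangular
    approximation evaluated at the mean latitude of the two points."""
    (lon1, lat1), (lon2, lat2) = p, q
    mean_lat = math.radians((lat1 + lat2) / 2.0)
    dx = math.radians(lon2 - lon1) * math.cos(mean_lat) * EARTH_RADIUS_KM
    dy = math.radians(lat2 - lat1) * EARTH_RADIUS_KM
    return dx, dy


def edge_length_km(p, q):
    dx, dy = local_offset_km(p, q)
    return math.hypot(dx, dy)


# Walk the perimeter S -> W -> N -> E -> S.
order = ["South", "West", "North", "East", "South"]
edges = {
    f"{a}-{b}": edge_length_km(CORNERS[a], CORNERS[b])
    for a, b in zip(order, order[1:])
}

# The long edge runs South -> West. Its angle counter-clockwise from north
# is atan(westward offset / northward offset).
dx, dy = local_offset_km(CORNERS["South"], CORNERS["West"])
rotation_ccw_deg = math.degrees(math.atan2(-dx, dy))

for name, length in edges.items():
    print(f"{name}: {length:.0f} km")
print(f"long edge rotated ~{rotation_ccw_deg:.0f} deg counter-clockwise from north")
```

Under this approximation the edges should come out close to 180 km and 390 km, and the long edge should be rotated close to 27 degrees, in line with the stated box dimensions.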

We are planning to run 869 sites, 838 of which are new, as part of this study.

These sites include:

- 77 cities (74 new)

- 10 new missions

- 139 CISN stations (136 new)

- 46 new sites of interest to PG&E

- 597 sites along a 10 km grid (571 new)

Of these sites, 32 overlap with the Study 17.3 region for verification.
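The site categories can be tallied as a cross-check on the totals. This is a small sketch: the dictionary simply restates the list above as (total, new) pairs.

```python
# Site categories for Study 18.3 as (total sites, new sites), from the list above.
SITE_CATEGORIES = {
    "cities": (77, 74),
    "missions": (10, 10),
    "CISN stations": (139, 136),
    "PG&E sites of interest": (46, 46),
    "10 km grid": (597, 571),
}

total = sum(t for t, _ in SITE_CATEGORIES.values())
new = sum(n for _, n in SITE_CATEGORIES.values())
overlap = total - new  # sites already run in Study 17.3

print(f"total={total}, new={new}, overlap with Study 17.3={overlap}")
```

The categories add up to all 869 sites, and 32 of them overlap with Study 17.3. However, the per-category new-site counts sum to 837, which is one fewer than the 838 quoted above.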

A KML file with all these sites is available with names or without names.

Velocity Models

For Study 18.3, we are planning to query velocity models in the following order, to populate CyberShake volumes:

- USGS Bay Area model

- CVM-S4.26.M01

- CCA-06

- 1D background model

We will apply a minimum Vs of 500 m/s.
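The sketch below shows the tiered lookup and the Vs floor. It assumes each model can be queried point by point and reports when a point falls outside its coverage. `Material`, `query_tiered`, and the toy model functions are hypothetical stand-ins, not the UCVM API. In this sketch the floor raises Vs only and leaves Vp and density unchanged, which may differ from how CyberShake actually applies the minimum.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence

MIN_VS_M_PER_S = 500.0  # minimum Vs for Study 18.3


@dataclass
class Material:
    vp: float   # m/s
    vs: float   # m/s
    rho: float  # kg/m^3


# Hypothetical model interface: returns a Material, or None outside the model's coverage.
ModelQuery = Callable[[float, float, float], Optional[Material]]


def query_tiered(models: Sequence[ModelQuery], background: ModelQuery,
                 lon: float, lat: float, depth_m: float) -> Material:
    """Use the first model (in priority order) that covers the point, fall back
    to the 1D background model, then apply the Vs floor."""
    for model in models:
        mat = model(lon, lat, depth_m)
        if mat is not None:
            break
    else:
        mat = background(lon, lat, depth_m)
    # Vs floor only; Vp and density are left unchanged in this sketch.
    return Material(mat.vp, max(mat.vs, MIN_VS_M_PER_S), mat.rho)


def toy_bay_area(lon, lat, depth_m):
    # Hypothetical footprint: only covers points north of 37 N.
    return Material(1800.0, 350.0, 2000.0) if lat > 37.0 else None


def toy_background(lon, lat, depth_m):
    # Hypothetical 1D background values.
    return Material(5000.0, 2900.0, 2600.0)


print(query_tiered([toy_bay_area], toy_background, -122.3, 37.8, 0.0))
print(query_tiered([toy_bay_area], toy_background, -120.0, 36.0, 0.0))
```

In the full ordering, the `models` list would hold the USGS Bay Area, CVM-S4.26.M01, and CCA-06 queries in that order. The 1D model would be passed as `background`.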

We will smooth to a distance of 10 km either side of a velocity model interface.
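This page does not say how the smoothing is carried out. The sketch below swaps in one simple scheme purely for illustration: a linear taper across the 20 km zone that straddles an interface between two models, A and B. The function names are hypothetical.

```python
SMOOTHING_HALF_WIDTH_KM = 10.0  # smoothing distance on each side of the interface


def blend_weight(signed_dist_km: float,
                 half_width_km: float = SMOOTHING_HALF_WIDTH_KM) -> float:
    """Weight given to model A at a point whose signed distance from the A/B
    interface is signed_dist_km (positive on the A side). The weight tapers
    linearly: 1 at +half_width, 0.5 on the interface, 0 at -half_width."""
    t = (signed_dist_km + half_width_km) / (2.0 * half_width_km)
    return min(1.0, max(0.0, t))


def smoothed_value(value_a: float, value_b: float, signed_dist_km: float) -> float:
    """Blend a property (e.g. Vs) from models A and B across the interface."""
    w = blend_weight(signed_dist_km)
    return w * value_a + (1.0 - w) * value_b


print(smoothed_value(1000.0, 2000.0, 0.0))
print(smoothed_value(1000.0, 2000.0, 5.0))
```

Outside the ±10 km zone, each point takes its own model's value unchanged.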

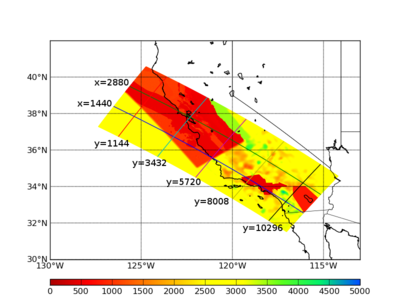

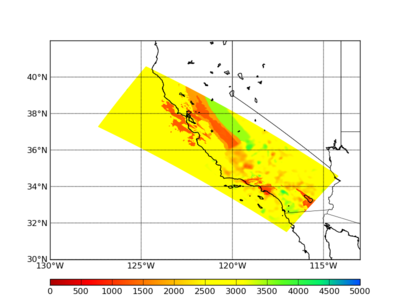

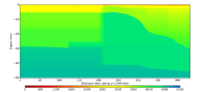

Vs cross-sections

We have extracted horizontal cross-sections at depths of 0 km and 1 km. The 0 km cross-section also indicates the vertical cross-section locations.

We extracted vertical cross-sections along 5 cuts parallel to the x-axis:

- Y index=1144, (36.747700, -126.242500) to (40.009400, -123.599700)

- Y index=3432, (35.647400, -124.092900) to (38.866100, -121.389900)

- Y index=5720, (34.509900, -122.002600) to (37.682400, -119.251200)

- Y index=8008, (33.337800, -119.969500) to (36.461400, -117.180700)

- Y index=10296, (32.133500, -117.991100) to (35.205700, -115.175300)

The southeast edge is on the left side of these plots.

We also extracted vertical cross-sections along 2 cuts parallel to the y-axis:

- X index=1440, (38.385500, -126.495800) to (32.548400, -116.106300)

- X index=2880, (39.481600, -125.625400) to (33.565800, -115.163000)

The northwestern edge is on the left of the plots.

Verification

Performance Enhancements (over Study 17.3)

Computational and Data Estimates

Computational Estimates

To produce the computational estimates, we selected the four N/S/E/W extreme sites in the box from each of two groups: (1) sites within the 200 km cutoff for southern SAF events (381 sites) and (2) sites outside the cutoff (488 sites). We averaged the results within each group and multiplied the inside and outside averages by the number of inside and outside sites, respectively.

We also rotated the simulation boxes to 30 degrees counter-clockwise from vertical. This makes them about 15% smaller than with the previously used angle of 55 degrees.

We scaled our results based on the Study 17.3 performance of site s975, a site also in Study 18.3, and the Study 15.4 performance of DBCN, which used a very large volume and 100m spacing.

| | # Grid points | # VMesh gen nodes | Mesh gen runtime | # GPUs | SGT job runtime | SUs |
|---|---|---|---|---|---|---|
| Inside cutoff, per site | 23.1 billion | 192 | 0.85 hrs | 800 | 1.35 hrs | 69.7k |
| Outside cutoff, per site | 10.2 billion | 192 | 0.37 hrs | 800 | 0.60 hrs | 30.8k |
| Total | | | | | | 41.6M |
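The per-site SUs in the table can be roughly reconstructed from the node counts and runtimes, and then scaled to the study total. This sketch rests on two assumptions that are not stated on this page. First, Titan charges 30 SUs per node-hour, with one GPU per node. Second, each site needs two SGT runs, one per horizontal component. `sgt_sus_per_site` is a hypothetical helper.

```python
TITAN_SU_PER_NODE_HOUR = 30     # assumption: Titan charging factor
SGT_COMPONENTS_PER_SITE = 2     # assumption: one SGT run per horizontal component
N_INSIDE, N_OUTSIDE = 381, 488  # sites inside/outside the 200 km cutoff


def sgt_sus_per_site(mesh_nodes, mesh_hrs, gpus, sgt_hrs):
    """Titan SUs for one site: mesh generation plus the SGT runs
    (assuming one GPU per Titan node)."""
    node_hrs = mesh_nodes * mesh_hrs + SGT_COMPONENTS_PER_SITE * gpus * sgt_hrs
    return TITAN_SU_PER_NODE_HOUR * node_hrs


inside = sgt_sus_per_site(192, 0.85, 800, 1.35)
outside = sgt_sus_per_site(192, 0.37, 800, 0.60)

# Study total: tabulated per-site SUs scaled by the number of sites in each group.
total = N_INSIDE * 69.7e3 + N_OUTSIDE * 30.8e3

print(f"inside ~{inside / 1e3:.1f}k SUs, outside ~{outside / 1e3:.1f}k SUs")
print(f"total ~{total / 1e6:.1f}M SUs")
```

Under these assumptions the inside value matches the table. The outside value comes out about 0.1k higher, which is consistent with rounding of the 0.60 hr runtime. The scaled total reproduces 41.6M.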

For the post-processing, we quantified the amount of work by determining the number of individual rupture points to process (summing, over all ruptures, the number of rupture variations for that rupture times the number of rupture surface points) and multiplying that by the number of timesteps. We then scaled based on performance of s975 from Study 17.3, and DBCN in Study 15.4.
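The work measure described above can be written as a short function. The sketch assumes that runtime scales linearly with the number of points to process. `points_to_process`, `scale_runtime`, and the two toy ruptures are hypothetical.

```python
def points_to_process(ruptures, n_timesteps):
    """Post-processing work: sum over ruptures of
    (rupture variations x rupture surface points), times the number of timesteps.

    ruptures: iterable of (n_rupture_variations, n_surface_points) pairs.
    """
    return sum(n_rv * n_pts for n_rv, n_pts in ruptures) * n_timesteps


def scale_runtime(ref_runtime_hrs, ref_points, points):
    """Scale a reference runtime linearly by the ratio of work."""
    return ref_runtime_hrs * points / ref_points


# Hypothetical toy input: two ruptures, 2 timesteps.
toy = points_to_process([(10, 100), (5, 400)], n_timesteps=2)

# Blue Waters per-site figures from the table: 9.32 hrs for 5.96 billion points.
outside_estimate = scale_runtime(9.32, 5.96e9, 2.29e9)
print(toy, f"{outside_estimate:.2f} hrs")
```

Scaling the inside-cutoff Blue Waters runtime down to 2.29 billion points predicts about 3.58 hrs. This is close to the tabulated outside-cutoff runtime of 3.57 hrs, so the tabulated runtimes are consistent with linear scaling.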

Below we list the post-processing estimates for running on Blue Waters and on Titan.

| | # Points to process | # Nodes (BW) | BW runtime | BW node-hrs | # Nodes (Titan) | Titan runtime | Titan SUs |
|---|---|---|---|---|---|---|---|
| Inside cutoff, per site | 5.96 billion | 120 | 9.32 hrs | 1120 | 240 | 10.3 hrs | 74.2k |
| Outside cutoff, per site | 2.29 billion | 120 | 3.57 hrs | 430 | 240 | 3.95 hrs | 28.5k |
| Total | | | | 635K | | | 42.2M |

If, as in Study 17.3, we run all the SGT calculations on Titan and split the PP 25% Titan / 75% Blue Waters, we will need 52.2M SUs on Titan and 476K node-hrs on Blue Waters.

Currently we have 91.7M SUs available on Titan (expires 12/31/18), and 8.14M node-hrs on Blue Waters (expires 8/31/18).
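The allocation split is simple arithmetic on the study totals from the tables. The sketch below makes it a function of the fraction of post-processing run on Titan, so other splits can be compared. `allocation_needs` is a hypothetical helper.

```python
# Study totals from the tables above
SGT_TITAN_SUS = 41.6e6   # all SGTs on Titan
PP_TITAN_SUS = 42.2e6    # all post-processing on Titan
PP_BW_NODE_HRS = 635e3   # all post-processing on Blue Waters

# Current allocations
TITAN_AVAILABLE_SUS = 91.7e6
BW_AVAILABLE_NODE_HRS = 8.14e6


def allocation_needs(titan_pp_fraction):
    """Return (Titan SUs, Blue Waters node-hrs) when all SGTs run on Titan and
    the given fraction of post-processing runs on Titan, the rest on Blue Waters."""
    titan = SGT_TITAN_SUS + titan_pp_fraction * PP_TITAN_SUS
    bw = (1.0 - titan_pp_fraction) * PP_BW_NODE_HRS
    return titan, bw


titan_sus, bw_node_hrs = allocation_needs(0.25)
print(f"Titan: {titan_sus / 1e6:.2f}M SUs "
      f"({100 * titan_sus / TITAN_AVAILABLE_SUS:.0f}% of allocation)")
print(f"Blue Waters: {bw_node_hrs / 1e3:.0f}K node-hrs "
      f"({100 * bw_node_hrs / BW_AVAILABLE_NODE_HRS:.1f}% of allocation)")
```

With a 25% Titan share, this comes to about 57% of the current Titan allocation and under 6% of the Blue Waters allocation.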

Data Estimates

SGT size estimates are scaled based on the number of points to process.

| | # Grid points | Velocity mesh | SGTs size | Temp data | Output data |
|---|---|---|---|---|---|
| Inside cutoff, per site | 23.1 billion | 271 GB | 410 GB | 1090 GB | 19.1 GB |
| Outside cutoff, per site | 10.2 billion | 120 GB | 133 GB | 385 GB | 9.3 GB |
| Total | | 158 TB | 216 TB | 589 TB | 11.6 TB |
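The data totals follow the same inside/outside scaling as the computational estimates. In the sketch below, the tabulated totals are reproduced when 1 TB is taken as 1024 GB. That unit choice is inferred from the numbers, not stated on this page.

```python
N_INSIDE, N_OUTSIDE = 381, 488
GB_PER_TB = 1024  # inferred: the tabulated totals match 1024-based units

# Per-site sizes in GB as (inside cutoff, outside cutoff), from the table above.
PER_SITE_GB = {
    "velocity mesh": (271, 120),
    "SGTs": (410, 133),
    "temp data": (1090, 385),
    "output data": (19.1, 9.3),
}

totals_tb = {
    name: (N_INSIDE * inside + N_OUTSIDE * outside) / GB_PER_TB
    for name, (inside, outside) in PER_SITE_GB.items()
}

for name, tb in totals_tb.items():
    print(f"{name}: {tb:.1f} TB")
```

The velocity mesh, SGT, and temp totals land within 1 TB of the table. The output total comes to about 11.5 TB, within rounding of the tabulated 11.6 TB.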

If we run all the SGT calculations on Titan and split the PP 25% Titan / 75% Blue Waters, we will need:

Titan: 589 TB temp files + 3 TB output files = 592 TB

Blue Waters: 162 TB SGTs (75% of the 216 TB total) + 9 TB output files = 171 TB

SCEC storage: 1 TB workflow logs + 11.6 TB output data files = 12.6 TB (45 TB free)
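The storage breakdown can be expressed as a function of the post-processing split. Following the breakdown above, this sketch assumes three things. Titan holds all the temp files plus its share of the output. Blue Waters holds the SGTs for the sites it post-processes plus its share of the output. SCEC keeps all output plus the workflow logs. `storage_needs` is a hypothetical helper.

```python
# Study-wide totals from the data table (TB)
SGT_TB = 216
TEMP_TB = 589
OUTPUT_TB = 11.6
WORKFLOW_LOGS_TB = 1


def storage_needs(titan_pp_fraction):
    """Return (Titan TB, Blue Waters TB, SCEC TB) for a given share of
    post-processing on Titan, under the assumptions stated above."""
    bw_fraction = 1.0 - titan_pp_fraction
    titan = TEMP_TB + titan_pp_fraction * OUTPUT_TB
    blue_waters = bw_fraction * (SGT_TB + OUTPUT_TB)
    scec = WORKFLOW_LOGS_TB + OUTPUT_TB
    return titan, blue_waters, scec


titan_tb, bw_tb, scec_tb = storage_needs(0.25)
print(f"Titan ~{titan_tb:.0f} TB, Blue Waters ~{bw_tb:.0f} TB, SCEC ~{scec_tb:.1f} TB")
```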

Database usage: (4 rows PSA [@ 2, 3, 5, 10 sec] + 12 rows RotD [RotD100 and RotD50 @ 2, 3, 4, 5, 7.5, 10 sec])/rupture variation x 225K rupture variations/site x 869 sites = 3.1 billion rows x 125 bytes/row = 364 GB (2.0 TB free on moment.usc.edu disk)
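The database estimate multiplies the rows per rupture variation by the number of rupture variations and sites. The sketch below spells out that product. The 364 GB figure is reproduced when 1 GB is taken as 1024³ bytes.

```python
PSA_PERIODS_S = [2, 3, 5, 10]
ROTD_PERIODS_S = [2, 3, 4, 5, 7.5, 10]
ROTD_TYPES = ["RotD100", "RotD50"]
RUPTURE_VARIATIONS_PER_SITE = 225_000
N_SITES = 869
BYTES_PER_ROW = 125

# One row per PSA period plus one row per (RotD type, period) pair.
rows_per_rv = len(PSA_PERIODS_S) + len(ROTD_TYPES) * len(ROTD_PERIODS_S)
total_rows = rows_per_rv * RUPTURE_VARIATIONS_PER_SITE * N_SITES
total_gib = total_rows * BYTES_PER_ROW / 1024**3

print(f"{rows_per_rv} rows/RV, {total_rows / 1e9:.1f} billion rows, ~{total_gib:.0f} GB")
```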